If you’re looking for a watch that does more than just tell time, you’ll want to check out the latest crop of smartwatches on the market. We’ve rounded up our top picks to show you which of these versatile wearables tick all the right boxes beyond telling time. From fitness trackers to smartwatches with traditional watch-like styling, here are the best smartwatches you can buy. Each smartwatch on our list offers, at a minimum, GPS capability, a basic fitness tracker function, the ability to sync with your smartphone and show text and other notifications, and all-day battery life.

Our top pick, the Samsung Gear S3 Frontier Smartwatch, is compatible with both iOS and Android mobile devices, has classic watch styling and comes with a battery that lasts up to three days, far longer than most smartwatches.

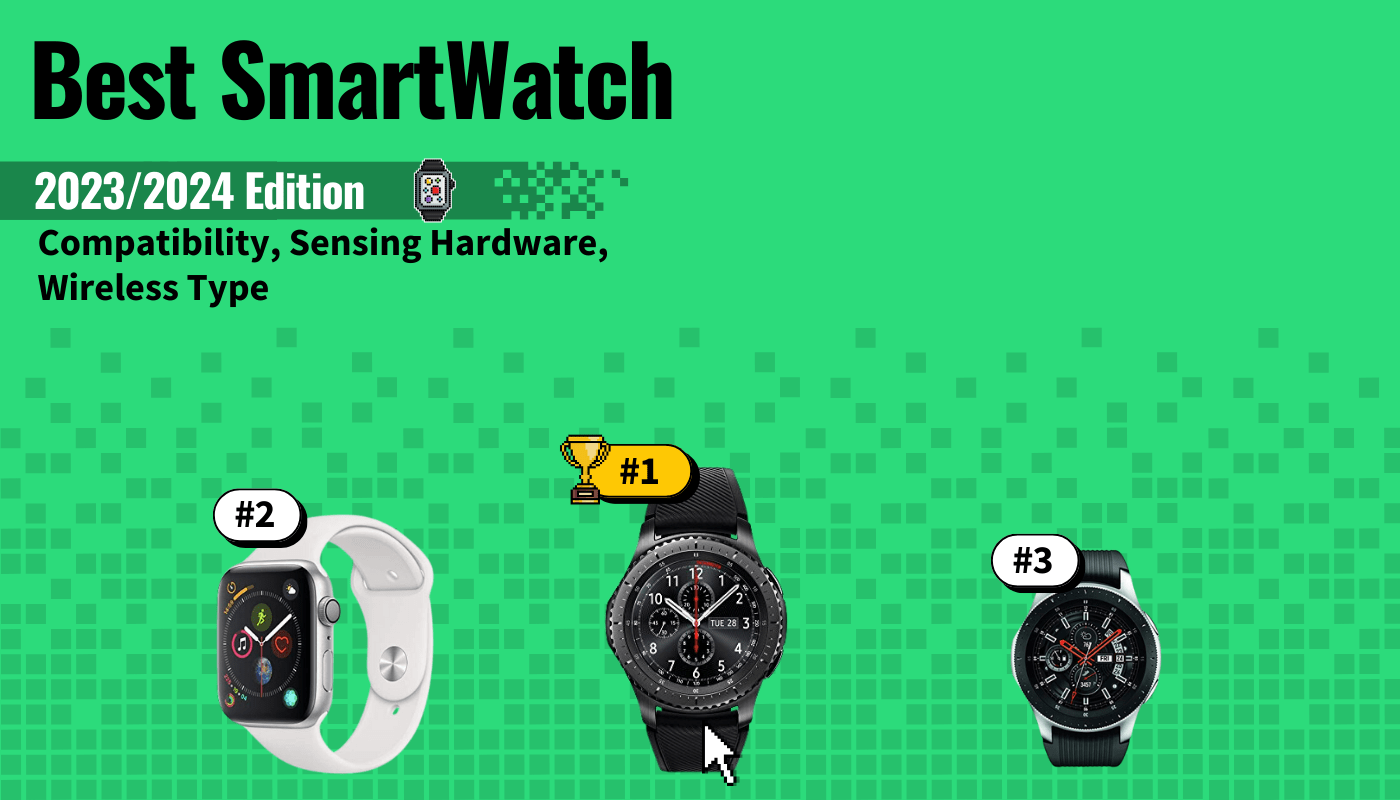

Top 7 Best Smartwatches Compared

#1 Samsung Gear S3 Frontier Smartwatch

Award: Top Pick

WHY WE LIKE IT: Versatile smart watch with GPS, Bluetooth and fitness tracker functions that works with both iOS and Android mobile devices, plus a long battery life and distinctive styling. It’s great for those who want the convenience of smartwatch functionality but prefer a more classic watch face and bezel appearance.

- Compatible with both iPhone and Android

- 3-day battery life

- IP68 waterproof and dust proof

- Samsung Pay only works with Android phones and certain cards

- Not as easy to access apps as with the Apple Watch 4 (our #2 pick)

- Fewer specialized fitness tracking features than the Apple Watch or Fitbit

This Android- and iOS-compatible smartwatch stands out for its handsome steel bezel, which you rotate to toggle between apps. Some may prefer this more physical interface to purely touch-screen smartwatches like the Apple Watch (our #2 pick). The Gear S3 Frontier Smartwatch is also a better choice for those who value traditional watch styling, and it displays a handsome clock face as its default screen.

It’s IP68-rated for water and dust protection, meaning if a little water gets on it, it should be fine, but it’s not meant for prolonged swimming or water sports use.

It comes with GPS, a heart rate and step tracker, and a very clear, high-resolution display. The GPS also lets you store maps offline, a handy feature. Unlike the Apple Watch, it lacks a heart rhythm/1-lead ECG feature. Where the Gear S3 does win out, however, is battery life: its battery lasts up to three days on a charge.

#2 Apple Watch Series 4

Award: Honorable Mention

WHY WE LIKE IT: The best smart watch for iPhone users, this update of the Apple Watch comes with a 64-bit processor and Apple’s proactive health monitor, as well as a wide range of watchOS apps. It’s great for iPhone users looking to add health and fitness tracking with a GPS-capable smart wearable. If you need a watch for monitoring your health while you work out on rough terrain, then check out our best rugged smartwatch.

- Widest selection of apps

- Virtual ECG, fall detection, step and mile counters

- WiFi and Bluetooth standard

- Cellular is a pricey option

- Shorter battery life (rated at 18 hours) than the Samsung Gear S3, our top pick

- Few physical controls

The Apple Watch 4 is the go-to smart watch for iPhone users. It has the most apps available so far of any smart watch, and it’s also the only smartwatch on our list that’s designed specifically (and exclusively) for pairing with an iOS mobile device. For those looking to use their smartwatch as a fitness tracker, the Apple Watch 4 offers some standout features like the virtual ECG, which claims to be able to spot atrial fibrillation and can send you PDFs that approximate a 1-lead electrocardiogram.

The Apple Watch 4 also has GPS and a sophisticated built-in accelerometer.

It supports calling when paired with a phone, or on its own if you pay the significant extra cost of upgrading to the cellular model. It supports texting, with a voice-to-text feature, when paired with your mobile device. The Apple Watch 4 charges fully in about two hours with its magnetic charger, but its 18-hour battery life is shorter than that of the Samsung Gear S3.

If you’re in the market for an Apple Watch, also check out the Apple Watch Series 5 and Apple Watch Series 3. The Series 5 is a sleeker version of the Apple Watch Series 3. Top picks for women include the Fitbit Versa, the Samsung Gear Sport, and more.

#3 Letscom Fitness Tracker Smartwatch

Award: Best Budget Fitness Tracker

WHY WE LIKE IT: A bargain, full-featured fitness tracker with a claimed 7-10 day battery life and compatibility with both iOS and Android phones, plus sleep and heart rate tracking, activity reminders, wrist sense, and call and SMS alerts. It’s great for those looking for a smart watch mainly as a fitness accessory with helpful reminders to get moving.

- Sleep tracking and silent alarm clock

- Bright, 0.96-inch OLED screen with customizable faces

- Up to 10-day battery life

- Fewer 3rd party apps available than with Samsung or Apple

- Not as water or dust proof as the pricier Samsung Gear S3

- No navigation

This bargain Fitbit alternative has some distinct advantages for those looking for a smart watch mainly as an exercise and fitness tracker. It offers activity tracking with customizable reminders, an accurate step counter and exercise time tracker, and even a calorie counting feature. It also has a suite of sleep tracking functions, and you can use it to set a clever silent alarm for reminders.

It’s IP67 water resistant, as opposed to the more rugged IP68 waterproof Samsung Gear S3, so it’s not suited for keeping on while showering or washing your hands. It also, of course, lacks the range of apps available for Apple Watch and Samsung devices. It does, however, serve admirably as a functional and budget-friendly alternative to a fitness tracker like a Fitbit.

#4 Samsung Galaxy Smartwatch

Award: Fastest Processor

WHY WE LIKE IT: With a 46mm bezel, a 1.3-inch Gorilla Glass display (the largest on our list) and extensively tested durability, including a 50m waterproof rating, it’s among the highest-performing smart watches on the market. It’s great for those looking for a durable and multifunctional smartwatch to take swimming, hiking or cycling, or for daily use.

- Waterproof to 50m

- Voice integration lets you take calls and respond to texts

- Compatible with both iPhone and Android

- Not cellular enabled; must be paired with a mobile phone for full functionality

- More expensive than the Gear S3

- Not as many 3rd party apps as for Apple Watch

The Samsung Galaxy Smart Watch has some of the most impressive specifications of any smartwatch on the market today. It offers the usual features of GPS, Bluetooth and WiFi phone pairing, heart rate and step tracking, plus it lets you take calls via an integrated speaker and microphone and respond to SMS texts via voice-to-text. As with the Samsung Gear S3, this smartwatch has a markedly different aesthetic than the Apple Watch; for those who prefer a more traditional watch face design, the Samsung may be preferable. It also lets you customize the watch face.

The Galaxy smartwatch also stands out for its tested durability claims. It comes with a Gorilla Glass screen for scratch protection and is rated for submersion in water up to 50 meters deep, making it one of the few smartwatches and fitness trackers that are suitable for swimming laps as well as counting your steps.

Another Galaxy Smartwatch worth checking out is the Galaxy Watch Active 2. The Galaxy Watch Active 2 is advertised as the “smartwatch that tracks your way to wellness.” The Watch Active 2 is designed to keep you motivated. It’s light and powerful and with Active 2’s 4G connection to EE and Vodafone, you’re free to leave your smartphone at home during walks, jogging, or any outdoor activity. The Watch Active 2 is water-resistant, offers heart rate tracking and battery life that lasts all day.

#5 Garmin Vivoactive 3 Smartwatch

Award: Best Travel

WHY WE LIKE IT: A sports-focused, water-resistant smart watch with pre-loaded GPS features and up to 7 days of battery life. It’s great for long-distance runners and other outdoor enthusiasts, with its Garmin Chroma display promising easy legibility even in direct sunlight.

- Estimates VO2 Max and HRV

- GPS mode available even without pairing a phone

- Supports Garmin Pay mobile payments

- Garmin OS may be less familiar than Samsung or Apple

- Battery life falls to 13 hours in GPS mode

- No voice calling feature

This smart watch from Garmin, a company perhaps best known for GPS-enabled car navigation devices, makes good use of GPS technology while offering some features that should appeal to more serious workout and fitness enthusiasts. It comes with 15 indoor sports and GPS apps pre-loaded. Beyond the usual heart rate monitoring, it shows you heart rate variability, a useful fitness measure in itself, and it claims to be able to estimate VO2 max and “fitness age.”

While the display is easy to read, it may lack some of the aesthetic pizazz of the Samsung Galaxy watch (our #4 pick) or the Apple Watch. While the Garmin Vivoactive may not have a wide range of supported 3rd party apps, it does offer Garmin’s mobile wallet feature and it also lets you use the GPS function without needing to be constantly paired with a smartphone. It’s compatible with iOS and Android devices for sharing fitness data, playing music and for notifications of calls and texts.

#6 Yamay 020 Fitness Tracker Watch

Award: Budget Waterproof

WHY WE LIKE IT: 7-10 day battery life and IP68 waterproofing, plus a wide range of features on this VeryFitPro compatible multipurpose smartwatch and fitness tracker. It’s great for those looking for a smartwatch to take on vacation or to the gym or pool.

- Works with VeryFitPro

- Sleep tracking supported

- iPhone and Android compatible

- Must be connected to phone for GPS function to work

- Limited to syncing through VeryFitPro app

- Durability not as proven or tested as with better-known products from Samsung, Garmin or Apple

This low-priced smartwatch and fitness tracker is primarily designed to work through the VeryFitPro app. It’s compatible with both iPhone and Android phones, and when paired via Bluetooth it shows GPS features and supports text, Facebook and WhatsApp notifications. It also includes 14 sports activity modes for tracking different kinds of workouts, and it claims to do sleep tracking as well.

#7 321OU Smartwatch

Award: Best Budget

WHY WE LIKE IT: One of the least expensive smart watches on the market, it gives you a travel-friendly way of keeping up your fitness tracking, monitoring phone notifications and even controlling the music on your phone. It’s great for travelers and people who want to try out a smart watch without committing to an Apple Watch or Samsung watch, which can cost up to ten times more than this 321OU Smart Watch.

- Works with iOS and Android

- Push notifications, music and activity tracking

- Sleep tracker and calorie estimator

- Requires an SD card sold separately for image viewing, music and some fitness tracking features

- No SMS or camera remote functionality with iPhones

- 24-hour battery life is somewhat limited compared to the 7 days of the Garmin (our #5 pick)

This budget smart watch is a useful fitness and workout tracking tool and serves as a great “starter” smart watch, especially if you’re traveling and may not want to be wearing an extra, expensive item. It syncs with most iOS and Android devices over a Bluetooth 3.0 connection, and there’s even a model of the 321OU that gives you the option to add a SIM card and use it as a 2.5G Android phone, assuming carrier compatibility.

However, it’s more limited when it comes to iPhone functions: unlike other non-Apple smart watches such as the Garmin or the Samsung (our #5 and #4 picks, respectively), it doesn’t support SMS notifications for iPhones. It also requires you to buy an SD card if you want to use functions like sound recording and image viewing. On the plus side, this smart watch is actually less expensive than some SD cards.

How We Decided

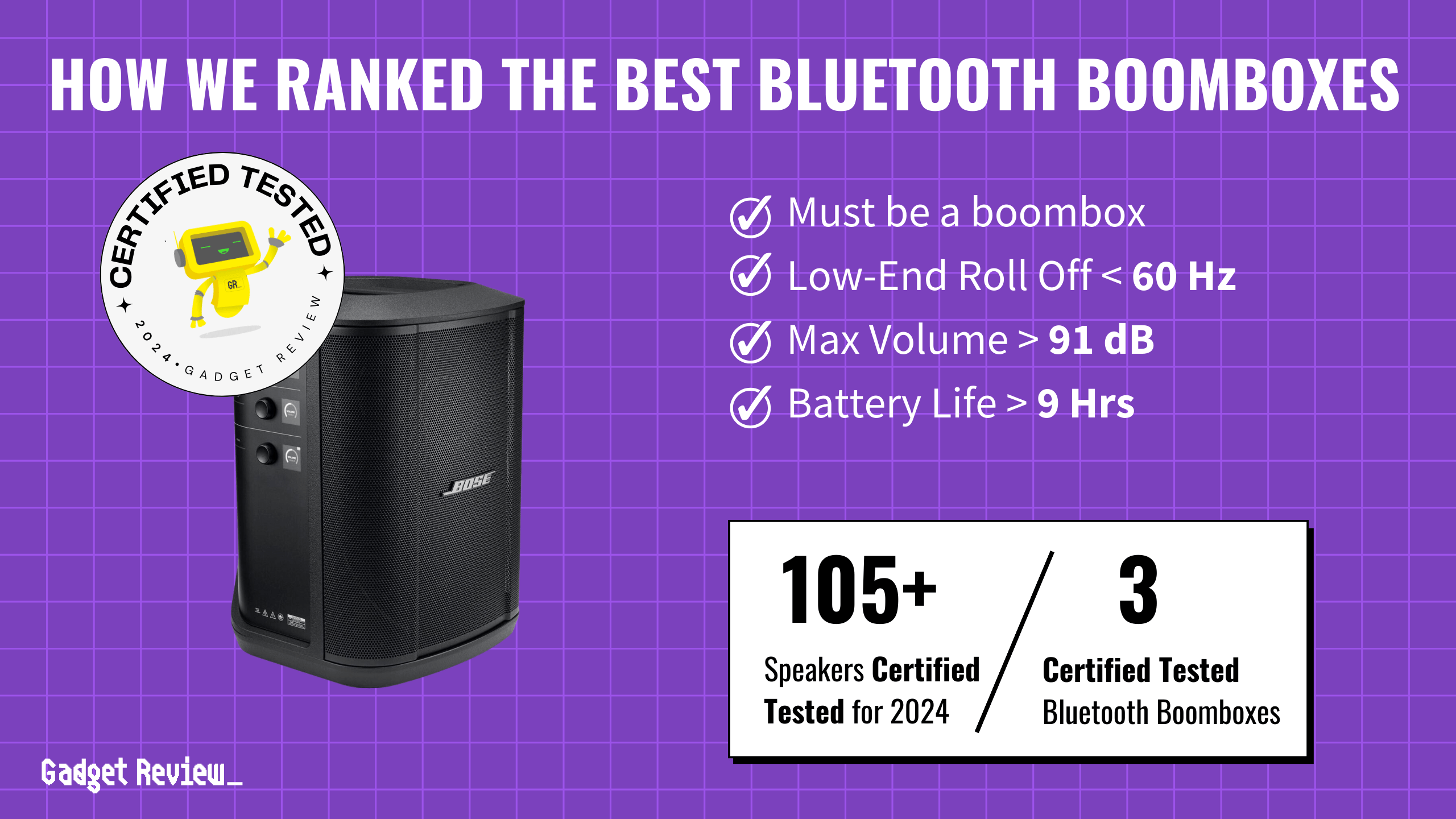

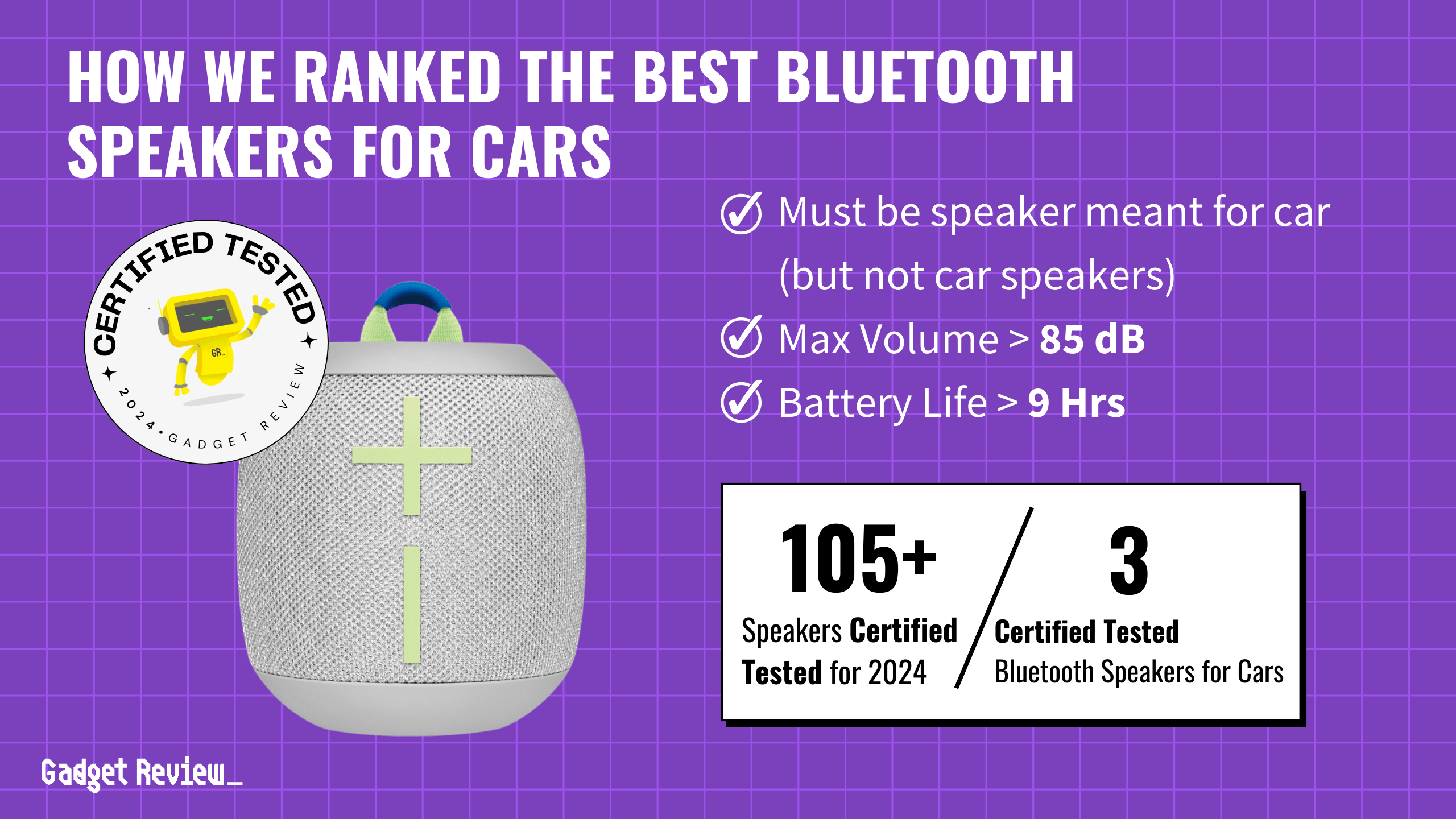

We narrowed our search down to smart watches that offered GPS capability, a basic fitness tracker function, the ability to sync with your smartphone and show text and other notifications, and all-day battery life.

Since compatibility is one of the main factors to consider when choosing a smart watch, we included only models that promised compatibility with iOS, Android or both. We also eliminated models that didn’t have a GPS function and those that required a monthly service fee. Although it’s possible to get cell service on some of the watches on our list, such as the Apple Watch 4, it’s optional and not required.

Finally, we selected smart watches that promised at least 10 hours of battery life.

Other smartwatches for Android users to consider include the TicWatch Pro Premium Wear OS Android Smartwatch, the Fitbit Versa 2 Health and Fitness Android Smartwatch, and the Fossil Gen 5 Carlyle Stainless Steel Android Smartwatch. Other Wear OS watches that just missed our list were the Skagen Falster 2 and the Huawei Watch 2. Focus on their specs and design, since that is where Wear OS models differ most.

Smart Watches Buyer’s Guide

The Most Important Features to Consider

- Compatibility

The key to smart watch functionality and convenience is whether the watch smoothly and reliably syncs with the relevant apps on your mobile device. This means that for best results you should check carefully to see if all the watch’s functions are supported with whatever kind of device you have. Sometimes it’s obvious, as in the case of the Apple Watch, which predictably is only compatible with iPhones. Other brands advertise wider compatibility: the Samsung Galaxy watch (our #4 pick) syncs with both iOS and Android mobile phones, as does the Garmin Vivoactive, and the relevant apps are available through the App Store or Google Play.

- Sensing Hardware

Smart watches vary in the number and quality of their sensors, but to be functional as a fitness tracker, activity monitor and navigation assistant, the best smart watches have at least GPS, an accelerometer and some kind of light or vibration sensor that enables them to accurately measure heart rate. Some add more fitness features like barometers, as with the Apple Watch 4 (our #2 pick) or the Samsung Galaxy (our #4 pick). The Apple Watch is a bit ahead in this department with its virtual ECG.

- Wireless Type

Smart watches can pair with your mobile device using Bluetooth or WiFi, or they can receive independent GPS signals; some are also eligible for cellular service, as with the Apple Watch or the Samsung Gear S3. However, as this costs extra and typically involves a separate monthly charge from your mobile service, we’ve focused on the built-in connectivity features of the smart watches themselves.