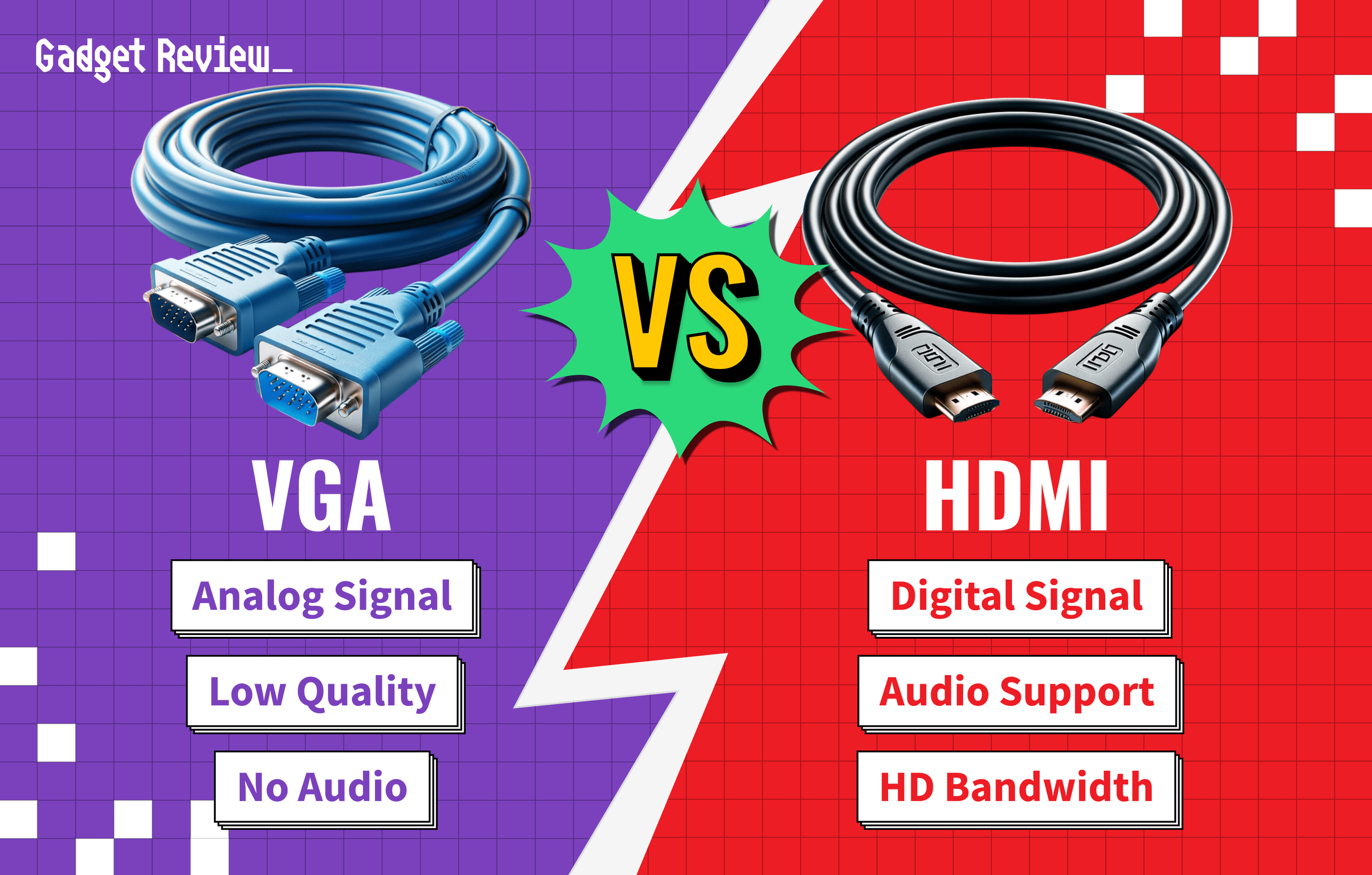

If you are shopping for another display to add to your computing arsenal, you may be comparing computer monitors with VGA and HDMI ports. The best computer monitors, after all, tend to include a number of useful connection ports. Once you know what VGA and HDMI are, you might wonder how the two ports differ. Keep reading to find out.

Key Takeaways

- HDMI cables deliver both audio and video, whereas VGA cables can only handle video.

- HDMI is preferred in nearly every scenario, but VGA can offer slightly lower input lag.

- VGA cables are susceptible to interference and crosstalk from nearby cables and devices.

Differences Between VGA and HDMI

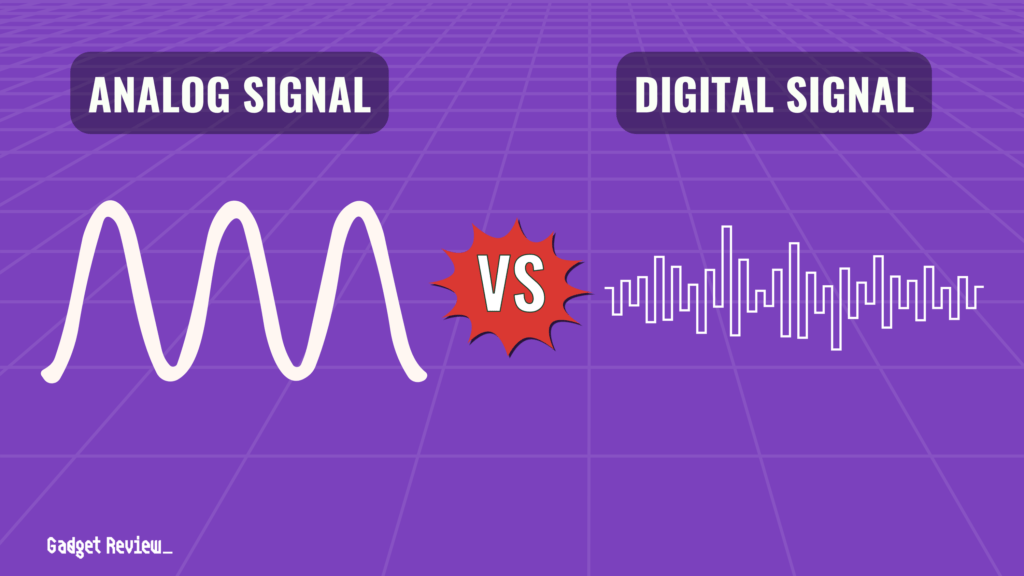

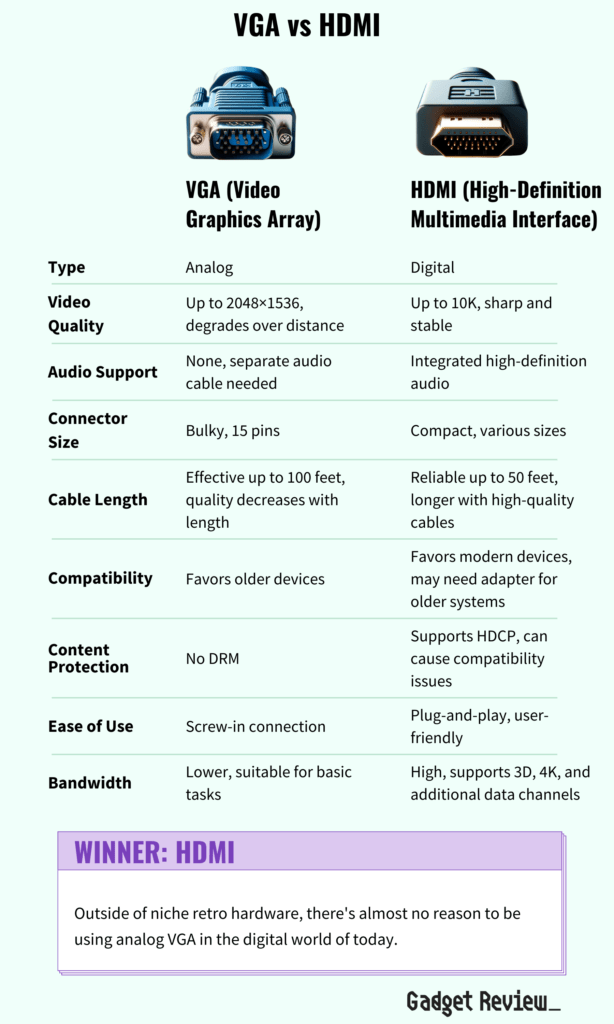

The primary difference between the two is that VGA (video graphics array) is an older connection standard that carries an analog signal, in which the voltage varies continuously, while HDMI is a newer standard that carries a digital signal made up only of 0s and 1s. Each has pros and cons, but generally speaking, HDMI is preferred in the modern era; we’ll get into why that is further down the page.

insider tip

You can usually find used VGA cables for cheap at yard sales, thrift stores, or online.

Sound

VGA is an old-school connection type and, as such, only delivers a video signal to the monitor from a computer, gaming console, DVD player, or related device. HDMI is a technology that carries both video and audio signals, saving you the hassle of having to connect a dedicated audio cable.

Modern HDMI cables are one of the reasons why setting up gaming consoles is so easy. A single HDMI cable between the console and the display passes along everything you need.

Specs

Given that HDMI cables are much newer and fancier than the ancient VGA cable, you can expect an improvement in nearly every specification, including image quality, for a better movie or gaming experience. HDMI cables have greater bandwidth, meaning they can transmit more data in the same amount of time.

There is, however, one area in which VGA may have the edge: because its analog signal needs no decoding or other processing, VGA can sometimes deliver lower input lag than HDMI. However, the differences, if any, are slim, and you’re unlikely to notice them in normal usage.

STAT: Although the connectors look the same, the HDMI standard itself has received revisions over the years. HDMI 2.1, first released in 2017, raised the maximum bandwidth to 48 Gbps and is required to transmit high-bandwidth signals such as 4K@120Hz or 8K video.
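To get a feel for why higher resolutions and refresh rates need a newer HDMI revision, here is a rough back-of-the-envelope sketch in Python. It is an illustration under simplifying assumptions, not an official calculation: the function names are made up for this example, the bandwidth figures are the commonly quoted maximums (18 Gbit/s for HDMI 2.0, 48 Gbit/s for HDMI 2.1), and the math counts only visible pixels at 24 bits each, ignoring blanking intervals and encoding overhead.

```python
# Rough estimate of uncompressed video data rate. It counts active pixels
# only and ignores blanking intervals and link-encoding overhead, so real
# requirements are somewhat higher than these figures.

# Maximum link bandwidth commonly quoted for each HDMI revision, in Gbit/s.
HDMI_MAX_GBPS = {"HDMI 2.0": 18.0, "HDMI 2.1": 48.0}


def raw_video_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Return the active-pixel data rate in gigabits per second."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def revisions_that_fit(width, height, refresh_hz, bits_per_pixel=24):
    """List the HDMI revisions whose quoted bandwidth covers the raw rate."""
    needed = raw_video_gbps(width, height, refresh_hz, bits_per_pixel)
    return [name for name, limit in HDMI_MAX_GBPS.items() if needed <= limit]


if __name__ == "__main__":
    # Hypothetical display modes chosen for illustration.
    modes = {
        "1080p@60Hz": (1920, 1080, 60),
        "4K@60Hz": (3840, 2160, 60),
        "4K@120Hz": (3840, 2160, 120),
    }
    for label, (w, h, hz) in modes.items():
        rate = raw_video_gbps(w, h, hz)
        print(f"{label}: {rate:.2f} Gbit/s ->", revisions_that_fit(w, h, hz))
```

Under these assumptions, 4K@120Hz works out to roughly 23.9 Gbit/s of raw pixel data, which is already past the 18 Gbit/s ceiling of HDMI 2.0 before any overhead is counted.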

Interference

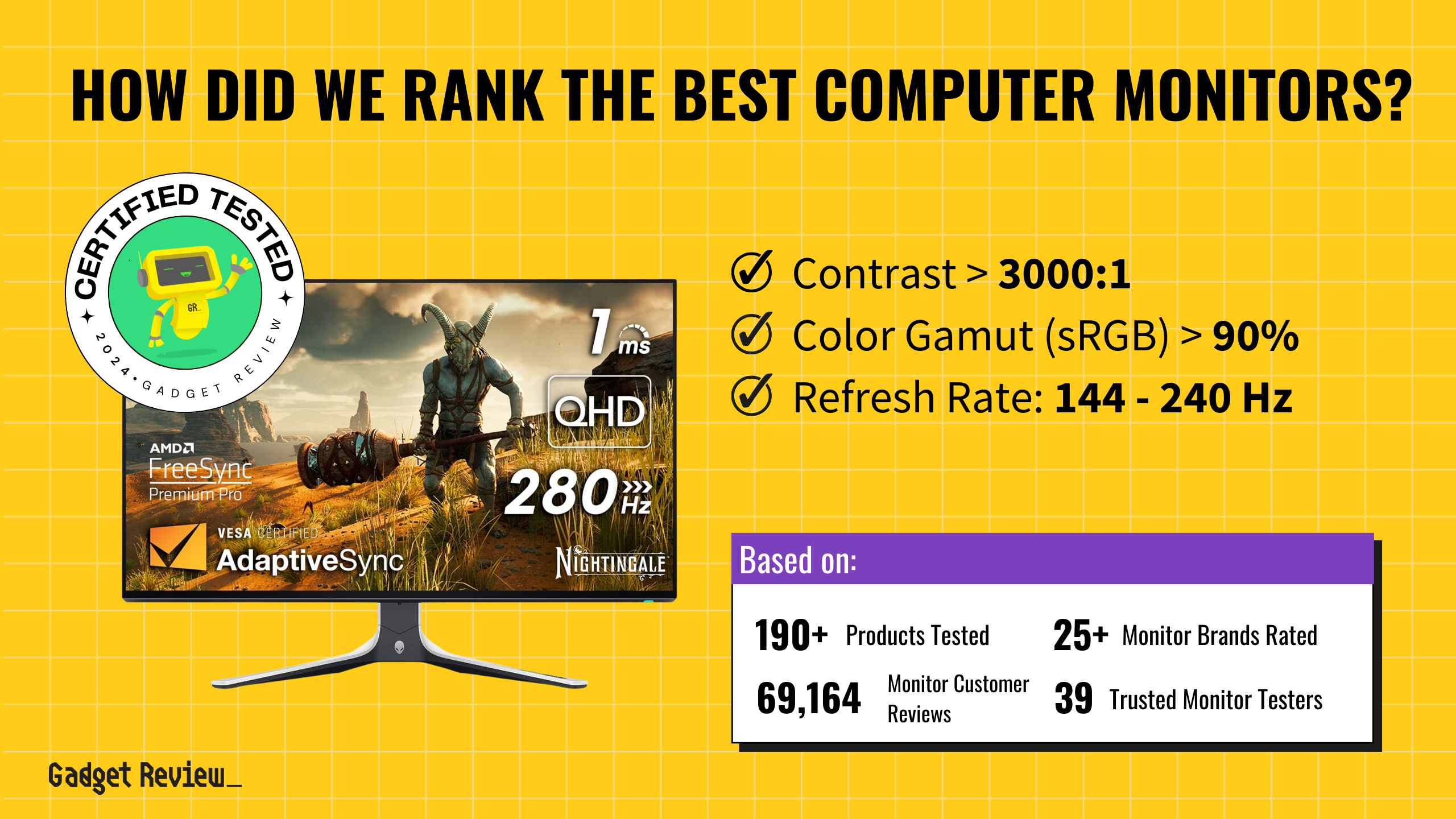

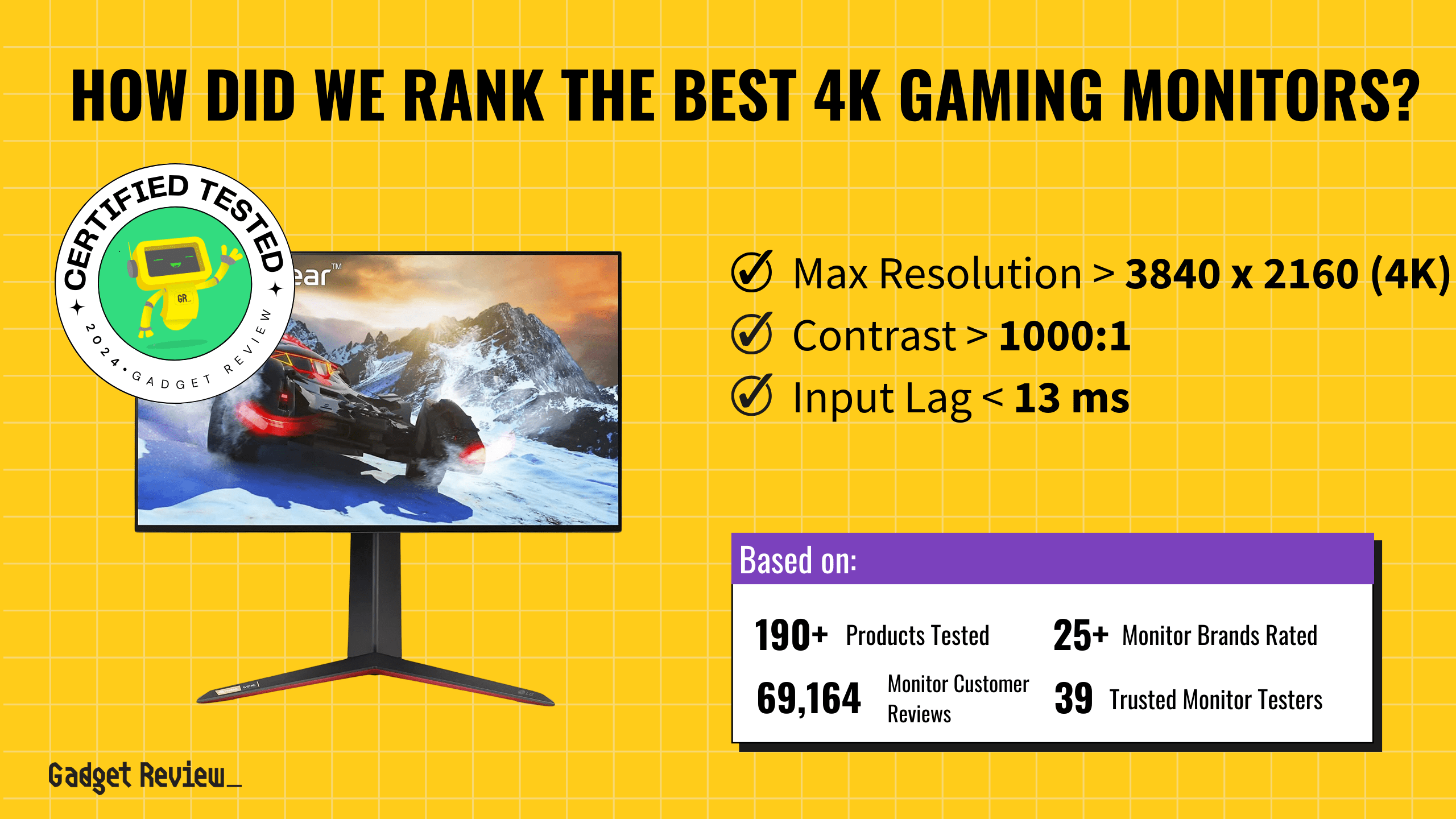

Because they carry analog signals, VGA cables are subject to crosstalk and interference from other cables and devices, which can result in an unsteady, blurry, or staticky image. Digital connectors like HDMI are far more resistant: as long as the cable is undamaged and the interference is not severe enough to corrupt the 0s and 1s, the signal reaches the display exactly as intended.
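The difference can be illustrated with a toy simulation. This is a simplified sketch, not a model of real VGA or HDMI signaling: the function names, the 0-to-1 "voltage" scale, and the noise level are all assumptions made up for the example. It sends the same pixel brightness two ways, once as a single analog level and once as eight separate bits, and adds the same random noise to both.

```python
import random


def to_bits(value, width=8):
    """Split an integer into a list of bits, most significant bit first."""
    return [(value >> i) & 1 for i in reversed(range(width))]


def from_bits(bits):
    """Rebuild an integer from a list of bits, most significant bit first."""
    out = 0
    for b in bits:
        out = (out << 1) | b
    return out


def send_pixel_analog(brightness, noise, rng):
    """Analog link: noise adds straight onto the level and stays in the image."""
    level = brightness / 255 + rng.uniform(-noise, noise)
    return level * 255


def send_pixel_digital(brightness, noise, rng, threshold=0.5):
    """Digital link: each noisy sample is snapped back to a clean 0 or 1."""
    received = []
    for bit in to_bits(brightness):
        sample = bit + rng.uniform(-noise, noise)
        received.append(1 if sample > threshold else 0)
    return from_bits(received)


if __name__ == "__main__":
    rng = random.Random(0)   # fixed seed so runs are repeatable
    noise = 0.2              # hypothetical interference amplitude
    for pixel in [0, 64, 128, 255]:
        analog = send_pixel_analog(pixel, noise, rng)
        digital = send_pixel_digital(pixel, noise, rng)
        print(f"sent {pixel:3d}  analog got {analog:7.2f}  digital got {digital:3d}")
```

With noise below the 0.5 decision threshold, the digital pixel always comes back unchanged, while the analog pixel drifts. Raise the noise above that threshold in this sketch and the digital link starts flipping bits too, which is why a badly damaged cable can still break an HDMI picture.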

Compatibility

The VGA standard was invented in 1987. While it had a good, long life, it’s far beyond “old news” by now. As a result, you’re likely to require an adapter to connect anything VGA to a modern display. However, if you’re using older gear, you may find that a VGA cable is a perfect fit.

warning

If you encounter issues such as a flickering monitor, it’s important to address them promptly. Learn how to fix a flickering monitor while gaming to maintain optimal performance and avoid disruptions during critical moments in your gaming sessions.

VGA cables remain the de facto standard for retro computing, which is noteworthy for fans of old-school CRT monitors. HDMI-to-VGA converter cables are fairly commonplace, so finding one shouldn’t be an issue, but a converter adds a further expense and another point of failure to your video setup.