When it comes to modern gaming monitors, there is plenty of lingo that the average consumer may not understand. For instance, you may have encountered the term FreeSync. What does FreeSync mean and is FreeSync worth it? Keep reading to learn all about it.

Key Takeaways

- FreeSync is an adaptive sync technology developed by industry leader AMD, the same company that manufactures high-end GPUs.

- FreeSync lets the monitor vary its refresh rate to match the GPU's frame rate, reducing screen-tearing and stuttering.

- Generally speaking, FreeSync-enabled monitors are a bit cheaper than G-Sync monitors.

What is AMD FreeSync?

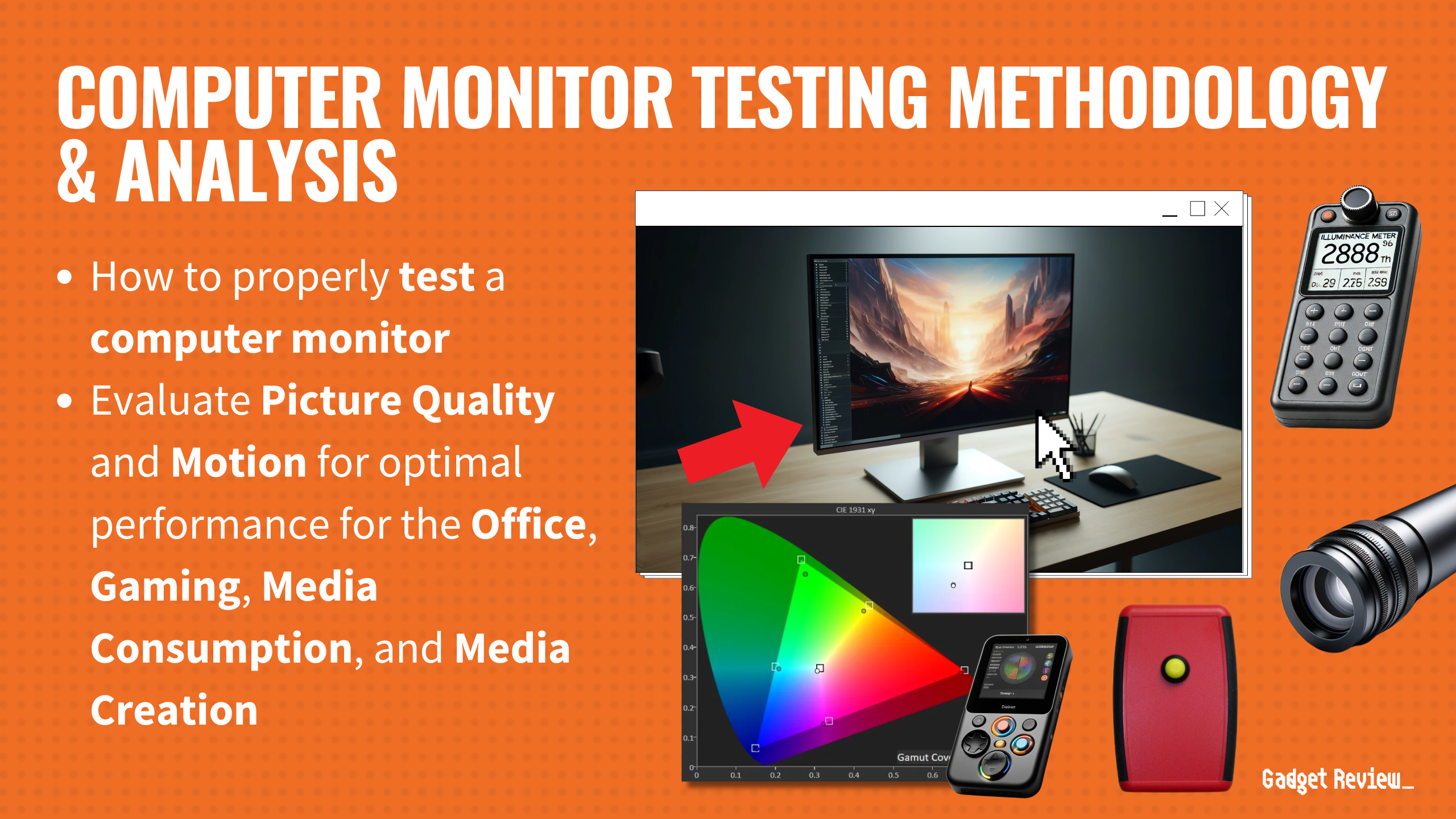

In order to figure out if FreeSync is worth it, you will have to understand exactly what it is and what it does, especially when you’re learning to set up your new gaming monitor.

FreeSync is an adaptive sync technology developed by industry giant AMD. It synchronizes the monitor's refresh rate with the frame rate delivered by the computer's GPU.

For gamers, the smooth gaming experience offered by FreeSync is a significant advantage, especially in fast-paced games where quick response times are crucial.

Insider tip: Adaptive sync ensures that the monitor's refresh rate is constantly adapting to the frame rate the GPU delivers.

Moreover, FreeSync significantly enhances gaming performance by synchronizing the frame rate output of the GPU with the refresh rate of the monitor, leading to smoother visuals.

Who Needs Adaptive-Sync Technology?

Nearly everyone can benefit from some form of adaptive sync technology.

Why? Computer monitors are extraordinarily stable when it comes to their refresh rate. GPUs, on the other hand, tend to be rather erratic as they react to the needs of the CPU and the demands of the gaming application.

The GPU will vary the frame rate it sends to the monitor, but a monitor without adaptive sync is only equipped to deal with a single predetermined refresh rate.

The end result? You will witness screen-tearing as you game, which is when the monitor shows parts of two different frames at once. At that point, you might wonder if a gaming monitor is worth it.
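To make the mismatch concrete, here is a minimal Python sketch. It assumes a simplified model in which a tear can appear whenever the GPU finishes a frame partway through a refresh instead of exactly on a refresh boundary. The function name, the 50 Hz display, and the frame timings are all hypothetical values chosen for illustration, not measurements.

```python
def tearing_swaps(frame_done_us, refresh_period_us):
    """Return the frame completion times that land mid-refresh.

    Simplified model: a frame that arrives exactly on a refresh boundary
    is shown cleanly; any other arrival can split the screen between
    the old frame and the new one.
    """
    return [t for t in frame_done_us if t % refresh_period_us != 0]


# Hypothetical 50 Hz display: one refresh every 20,000 microseconds.
REFRESH_PERIOD_US = 20_000

# Hypothetical, unevenly spaced GPU frame completion times (microseconds).
frames = [20_000, 35_000, 60_000, 72_500, 100_000]

torn = tearing_swaps(frames, REFRESH_PERIOD_US)
print(torn)  # [35000, 72500]
```

In this toy example, two of the five frames arrive between refreshes, and those are the moments at which a fixed-refresh monitor could show a tear.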

FreeSync technology is compatible with a wide range of graphics cards, enhancing its accessibility to a broader range of users.

How Does Adaptive-Sync Tech Work?

There are a few different ways in which adaptive-sync technologies work, but they all share certain commonalities.

Generally speaking, adaptive sync makes sure that the monitor's refresh rate is constantly adapting to the frame rate coming from the GPU, rather than staying locked to one predetermined value. NVIDIA's G-Sync works on the same principle.
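The idea can be sketched in a few lines of Python. This is an illustration only: the function name is made up, and the 48 to 144 Hz range is an assumed example, since every monitor has its own supported range.

```python
def display_refresh_hz(gpu_fps, min_hz=48.0, max_hz=144.0):
    """Refresh rate a variable-refresh monitor would run at for a given GPU frame rate.

    Inside the supported range the monitor simply follows the GPU.
    Outside it, the rate is pinned to the nearest limit.
    The 48-144 Hz defaults are assumed example values.
    """
    return max(min_hz, min(max_hz, gpu_fps))


for fps in (30.0, 75.0, 110.0, 200.0):
    print(f"GPU {fps:5.1f} fps -> monitor {display_refresh_hz(fps):.1f} Hz")
```

At 75 or 110 frames per second the monitor matches the GPU exactly. At 30 or 200 frames per second the GPU is outside the assumed range, so the monitor can no longer follow it.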

Insider tip: FreeSync monitors use a variable refresh rate that changes as needed to match the frame rate the GPU delivers.

When setting up dual monitors for gaming, it’s beneficial to have both monitors equipped with FreeSync technology to ensure uniform performance and visual quality across both screens.

Benefits of FreeSync

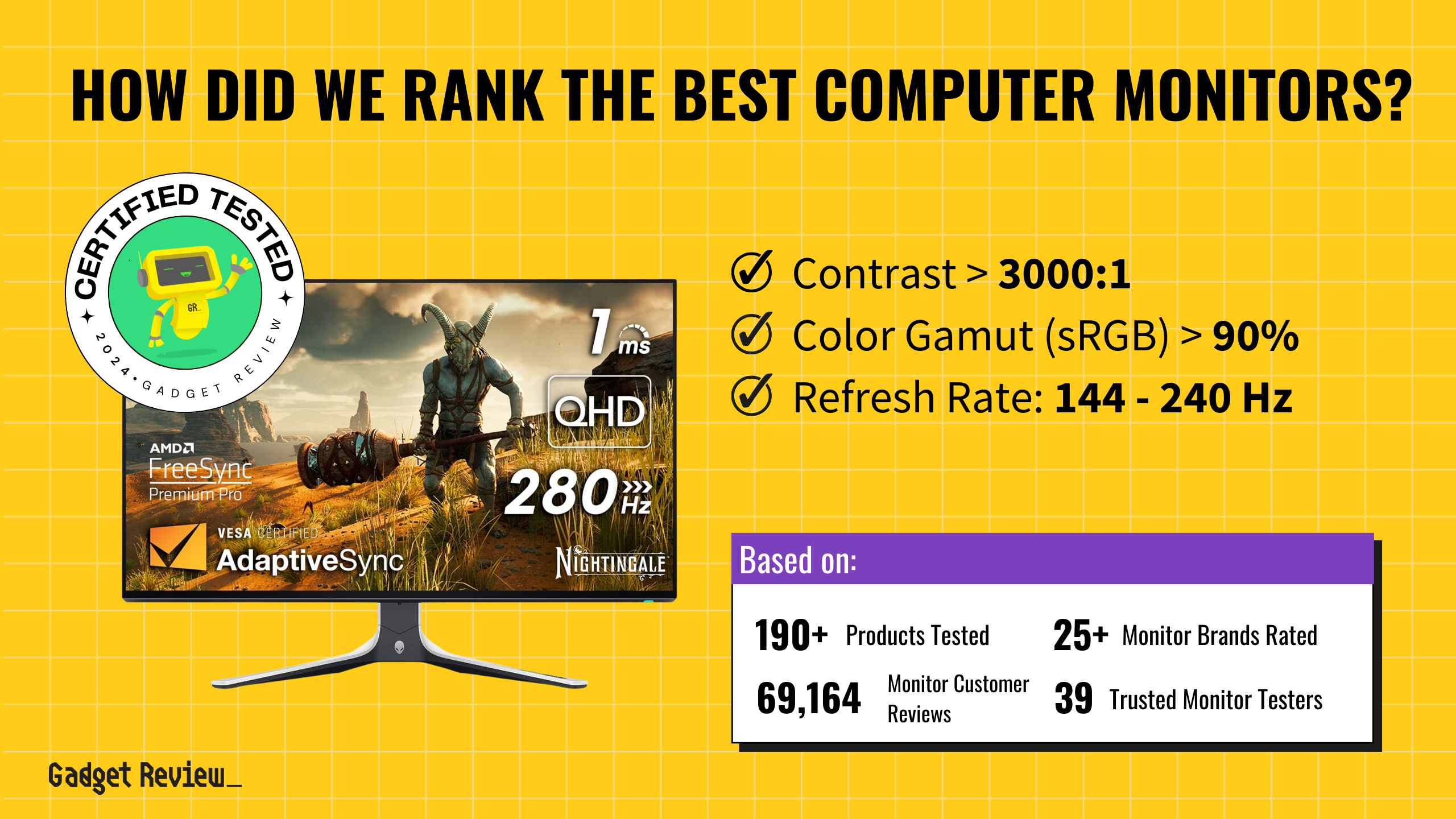

There is a reason AMD’s FreeSync is one of the two major players on the block, as it offers certain benefits. FreeSync operates over both HDMI and DisplayPort, offering flexibility in terms of connectivity.

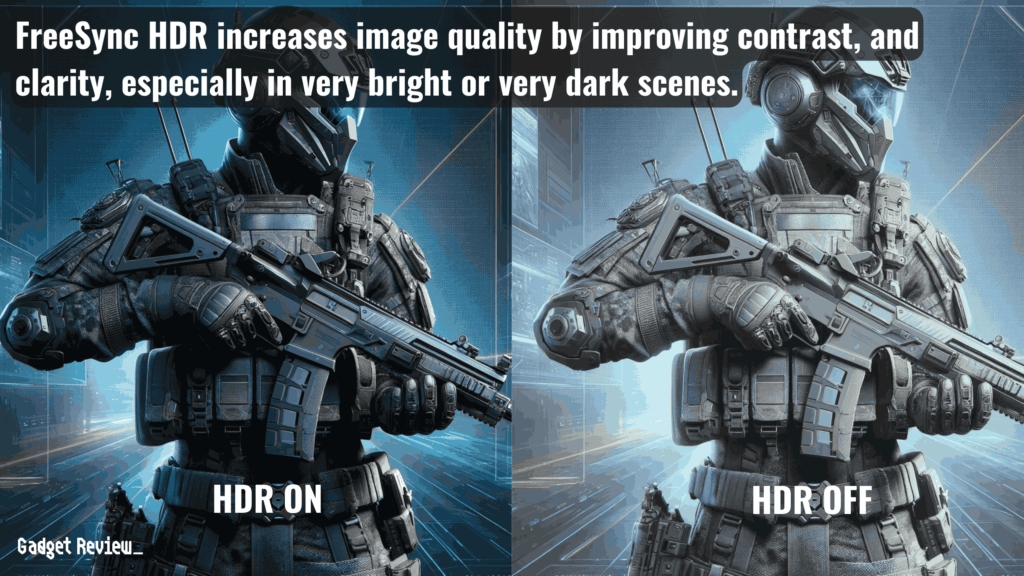

FreeSync Premium and FreeSync Premium Pro both include Low Framerate Compensation (LFC), which keeps motion smooth when a game's frame rate drops below the monitor's minimum refresh rate. FreeSync Premium Pro adds support for HDR (High Dynamic Range) content, further enhancing the visual experience.
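Low Framerate Compensation is commonly described as showing each frame more than once when the GPU falls below the monitor's minimum refresh rate, so that the resulting refresh rate lands back inside the supported range. The Python sketch below illustrates that idea under stated assumptions: the function name and the 48 to 144 Hz range are hypothetical, and the exact behavior of a real driver may differ.

```python
def lfc_refresh_hz(gpu_fps, min_hz=48.0, max_hz=144.0):
    """Return (multiplier, refresh_hz) for a simplified LFC model.

    Each GPU frame is shown `multiplier` times, using the smallest
    multiplier that brings the refresh rate up to at least `min_hz`.
    The 48-144 Hz defaults are assumed example values.
    """
    if gpu_fps <= 0:
        raise ValueError("gpu_fps must be positive")
    multiplier = 1
    while gpu_fps * multiplier < min_hz:
        multiplier += 1
    # Above the range the monitor cannot go faster than max_hz.
    return multiplier, min(gpu_fps * multiplier, max_hz)


print(lfc_refresh_hz(30.0))   # (2, 60.0): each frame shown twice
print(lfc_refresh_hz(20.0))   # (3, 60.0): each frame shown three times
print(lfc_refresh_hz(100.0))  # (1, 100.0): already in range, no repetition
```

This approach only works when the monitor's maximum refresh rate is comfortably higher than its minimum (roughly double or more), because otherwise a doubled frame rate could overshoot the top of the range.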

Additionally, FreeSync technology continues to evolve, with newer versions offering better performance and compatibility with a wider range of displays and graphics cards.

Reduced Screen-Tearing and Stuttering

FreeSync’s intended job is to reduce or eliminate screen-tearing and screen stuttering. In other words, if you have a FreeSync-enabled gaming monitor, these two issues should largely disappear, at least while the frame rate stays within the monitor's supported refresh range.

FreeSync monitors use a variable refresh rate that changes as needed to match the frame rate the GPU delivers.

For those with high-refresh-rate monitors, FreeSync keeps motion smooth even when the frame rate fluctuates below the monitor's maximum, reducing visual distortions.

Budget-Friendly

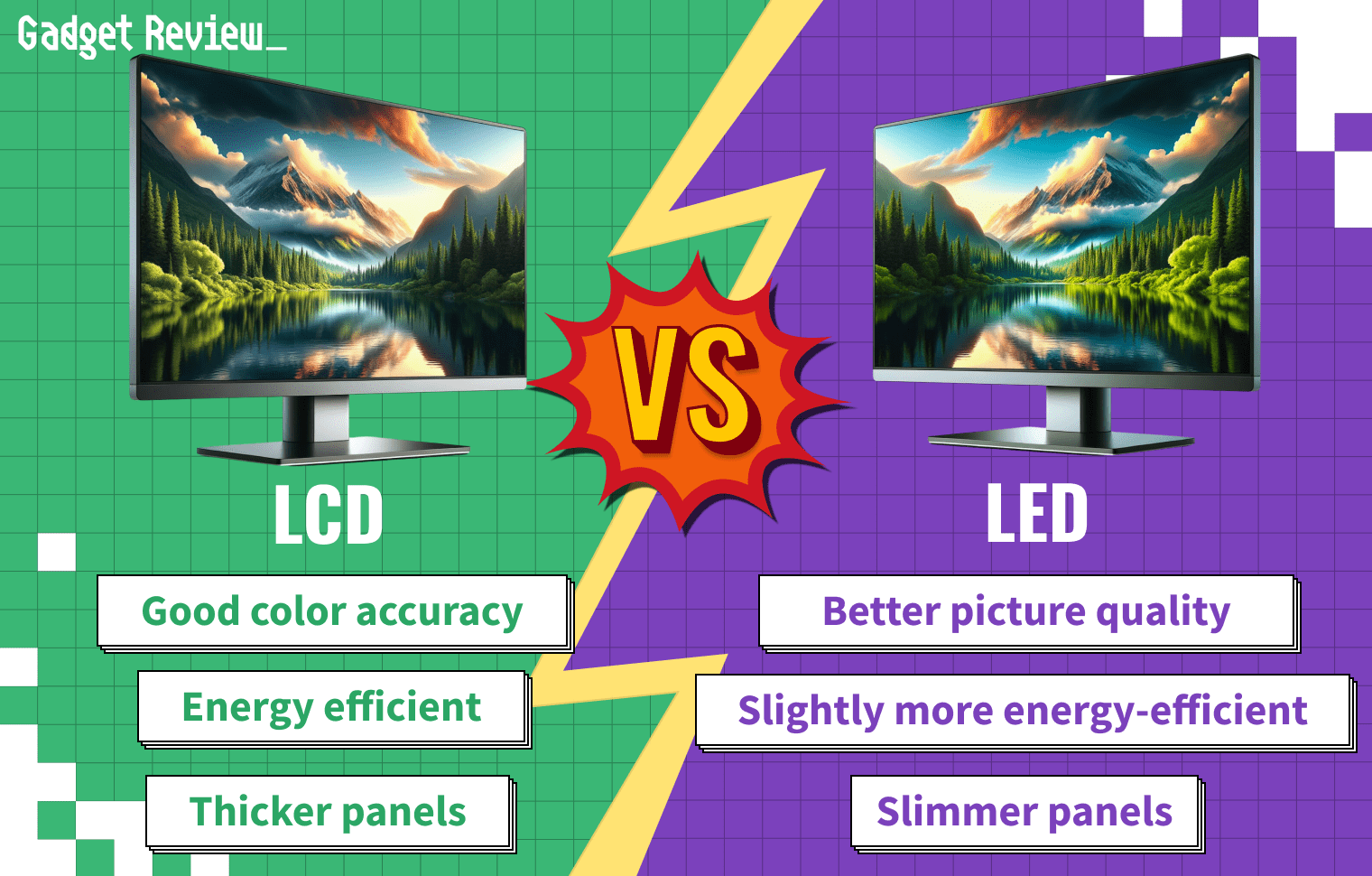

FreeSync monitors tend to be cheaper than NVIDIA G-Sync monitors. NVIDIA is extremely selective and hands-on when it comes to the implementation of its proprietary hardware.

FreeSync’s standard configuration is designed to work seamlessly with compatible graphics cards, ensuring a smooth gameplay experience without the need for additional hardware like a G-Sync module.

STAT: There are FreeSync (as well as G-Sync) displays whose variable refresh range extends down to 30 Hz or, according to AMD, even lower. (source)

AMD is a bit more lenient, so there are many more FreeSync-enabled displays on the market than G-Sync displays. This leads to competition, which leads to lower prices.

FreeSync’s compatibility with VESA’s Adaptive-Sync technology ensures a wider range of compatible monitors, further enhancing its appeal.

In terms of software, adjusting in-game settings to match the monitor’s capabilities, such as capping the frame rate within the monitor's FreeSync range, can further optimize the experience.