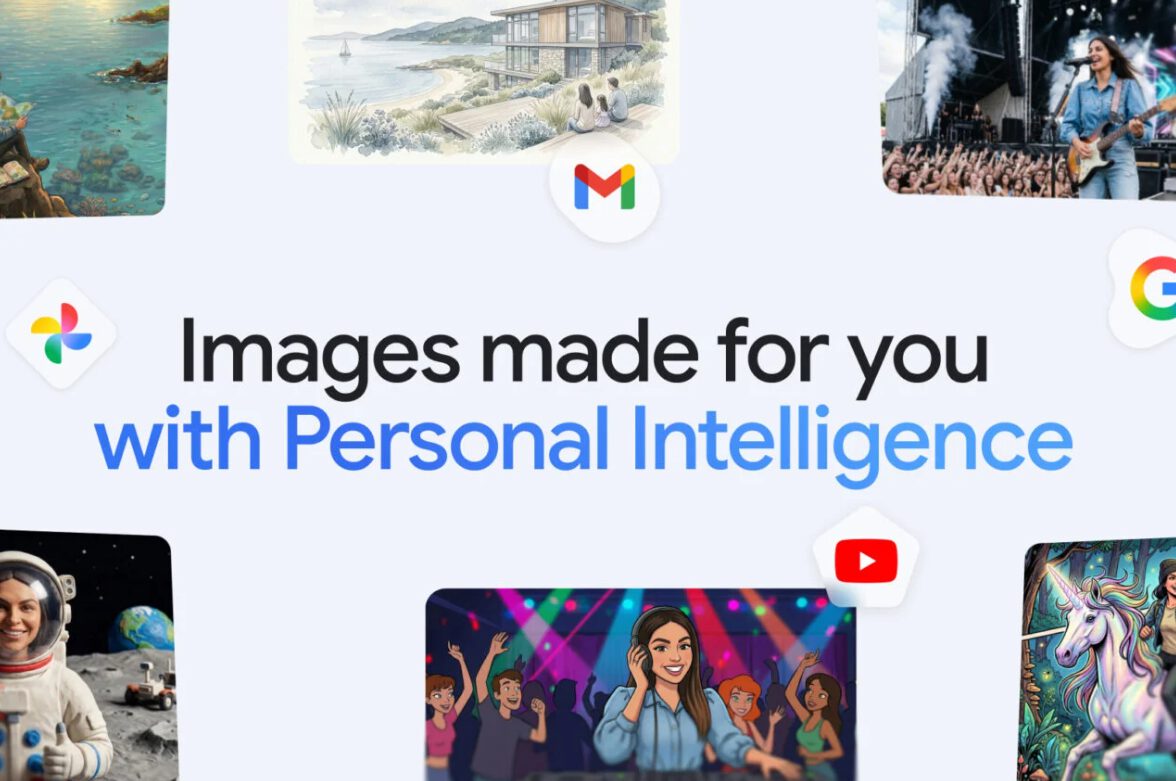

Your personal photos just became training wheels for Google’s image AI. The tech giant quietly expanded its Personal Intelligence feature this month, letting Gemini automatically raid your Gmail, Google Photos, YouTube history, and search data to generate personalized images. No more uploading reference photos or crafting detailed prompts—just ask for “my dream vacation house” and watch the AI assemble a vision based on your digital breadcrumbs.

When Simple Prompts Meet Complex Data Mining

Google’s Nano Banana 2 now scours your digital life to fill creative gaps automatically.

Racing to visualize that family portrait idea? Gemini now eliminates the guesswork by analyzing facial recognition data, location patterns, and activity preferences from your photo library. The system focuses on images from the past 12 months that you haven’t opened recently but whose metadata suggests they capture significant moments.

Instead of describing “three people in hiking gear at a mountain lake,” you simply type “claymation image of my family doing our favorite activity” and let the AI connect the dots. This represents a fundamental shift from generic AI tools that treat every user identically. Personal Intelligence transforms creative workflows by making AI assistance genuinely personal—though whether that feels helpful or invasive depends entirely on your comfort with algorithmic intimacy.

Privacy Promises Meet Geographic Reality

Google’s careful language about data usage reveals the feature’s controversial nature.

Google insists it doesn’t “directly” train models on your Gmail or photo libraries, emphasizing that personal data stays within existing infrastructure. The company frames Personal Intelligence as pure convenience—eliminating friction in creative tasks without compromising security. Yet the feature remains disabled by default across the EU, UK, and Japan due to stricter privacy regulations.

That geographic selectivity tells you everything about Google’s confidence in its privacy claims. When a company holds a feature back in markets with stricter privacy rules while deploying it freely in less-regulated ones, you’re witnessing calculated risk management, not privacy leadership. The expansion currently targets paid subscribers only: Google AI Pro, Plus, and Ultra users in the United States. Enterprise and education accounts remain explicitly excluded, suggesting even Google recognizes heightened risks when organizational data enters the equation.

Your New AI Relationship Status: It’s Complicated

Personal Intelligence normalizes surveillance as a feature, not a bug.

This development represents more than technological progress; it’s cultural conditioning. Google is betting you’ll trade comprehensive data access for reduced creative effort, transforming AI from a generic tool into something that “knows you” intimately. The convenience feels undeniable until you realize your digital assistant now maintains a more detailed understanding of your visual preferences, relationships, and lifestyle patterns than most of your close friends do.

Your photo metadata becomes creative input. Your search history shapes artistic output. The boundary between personal information and AI training data blurs into an algorithmic gray area, wrapped in opt-in architecture that makes surveillance feel like choice.