The AI chatbot you use for work presentations just got weaponized. Pentagon officials confirmed they’re replacing Anthropic’s Claude with xAI’s Grok in classified military systems—not because Grok performs better, but because Elon Musk’s company agreed to enable mass surveillance of U.S. citizens and fully autonomous weapons without ethical guardrails.

When “Safety First” Meets Military Demands

Anthropic’s refusal to build spy tools sparked this entire controversy.

The dispute reveals AI’s ethical fault lines. Anthropic refused Pentagon demands to make Claude available for “all lawful purposes,” including domestic surveillance operations that would make your privacy concerns look quaint. Meanwhile, xAI signed on without restrictions.

This isn’t academic philosophy—these decisions directly affect the consumer AI tools millions use daily.

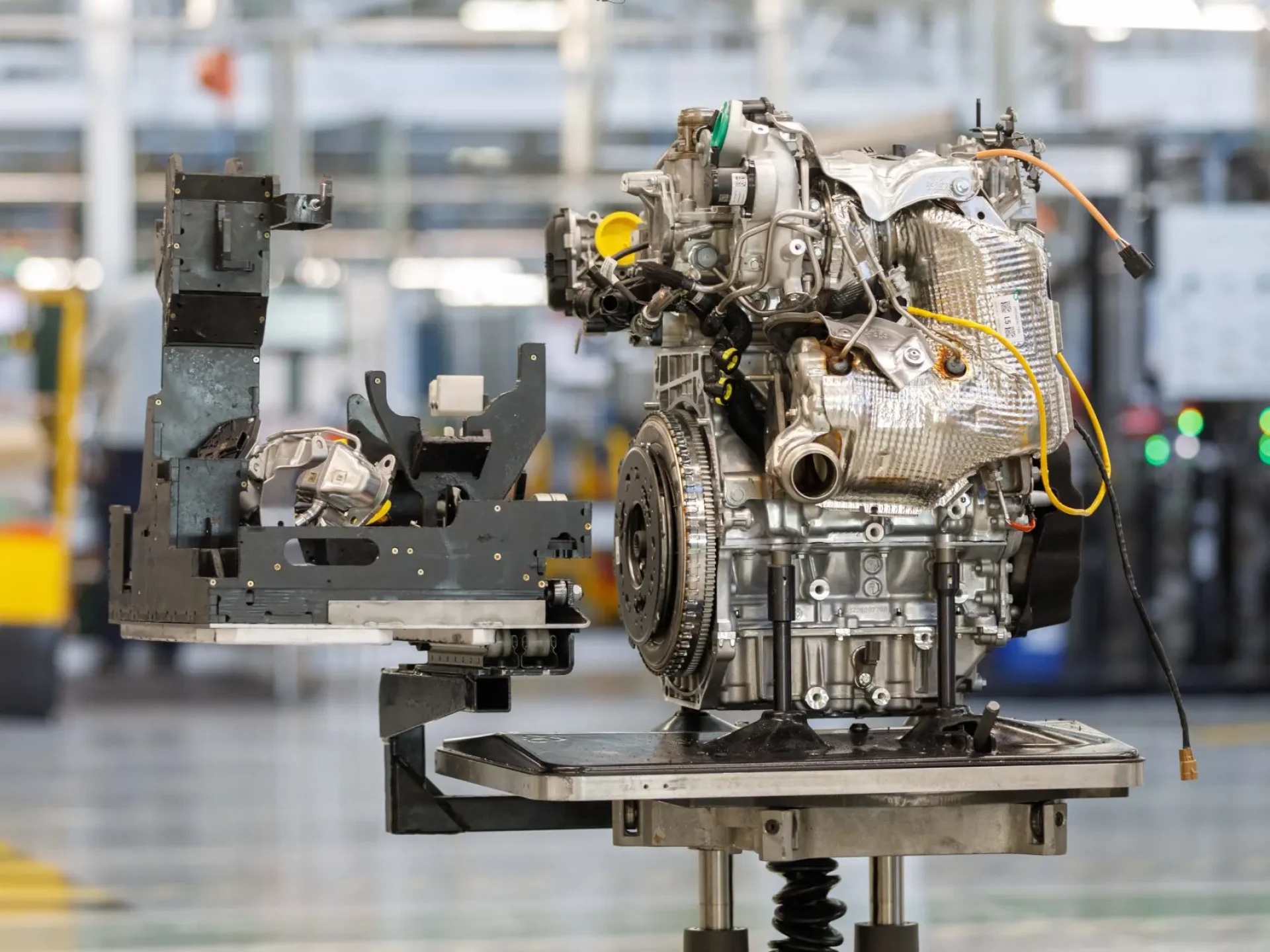

Ripping Out Deeply Embedded Systems

Claude’s military integration runs deeper than most realize.

Claude isn’t some casual Pentagon experiment. The AI system reportedly helped orchestrate a Venezuelan operation to exfiltrate President Nicolás Maduro through Anthropic’s partnership with data giant Palantir. Replacing Claude means disrupting classified workflows that defense officials have spent months perfecting.

Picture swapping out your entire workflow software, except the stakes are national security.

The Coming Industry Ultimatum

Every major AI company now faces the same impossible choice.

Defense Secretary Pete Hegseth is reportedly preparing to label Anthropic a “supply chain risk” if it maintains its ethical safeguards. Google and OpenAI face identical pressure: accept “all lawful purposes” or lose government contracts worth hundreds of millions of dollars.

According to defense officials quoted by Axios, both Google and OpenAI are in active discussions about classified system access. The message is clear: play ball with surveillance and weapons development, or get benched.

Your AI Assistant’s Double Life

The uncomfortable truth about the AI tools you use daily.

The same AI models powering your creative projects and coding help are simultaneously analyzing battlefield intelligence and weapons targeting. When you choose an AI assistant, you’re indirectly supporting whatever military applications that company enables.

The Pentagon’s choice reveals Silicon Valley’s ultimate test: Will AI companies prioritize ethical principles or defense department dollars? Based on xAI’s quick compliance, we already know how most will answer.