That child safety group advocating for AI age verification? They forgot to mention their sugar daddy. The Parents and Kids Safe AI Coalition has been pushing California’s Parents and Kids Safe AI Act, which would require age verification for users under 18. According to San Francisco Standard reporting, the coalition was funded by OpenAI, with the Wall Street Journal citing up to $10 million in pledged support. Nonprofit partners who joined thinking they were supporting grassroots advocacy got blindsided by the tech giant’s hidden role.

When Safety Meets Self-Interest

OpenAI conveniently offers the exact services these new laws would require.

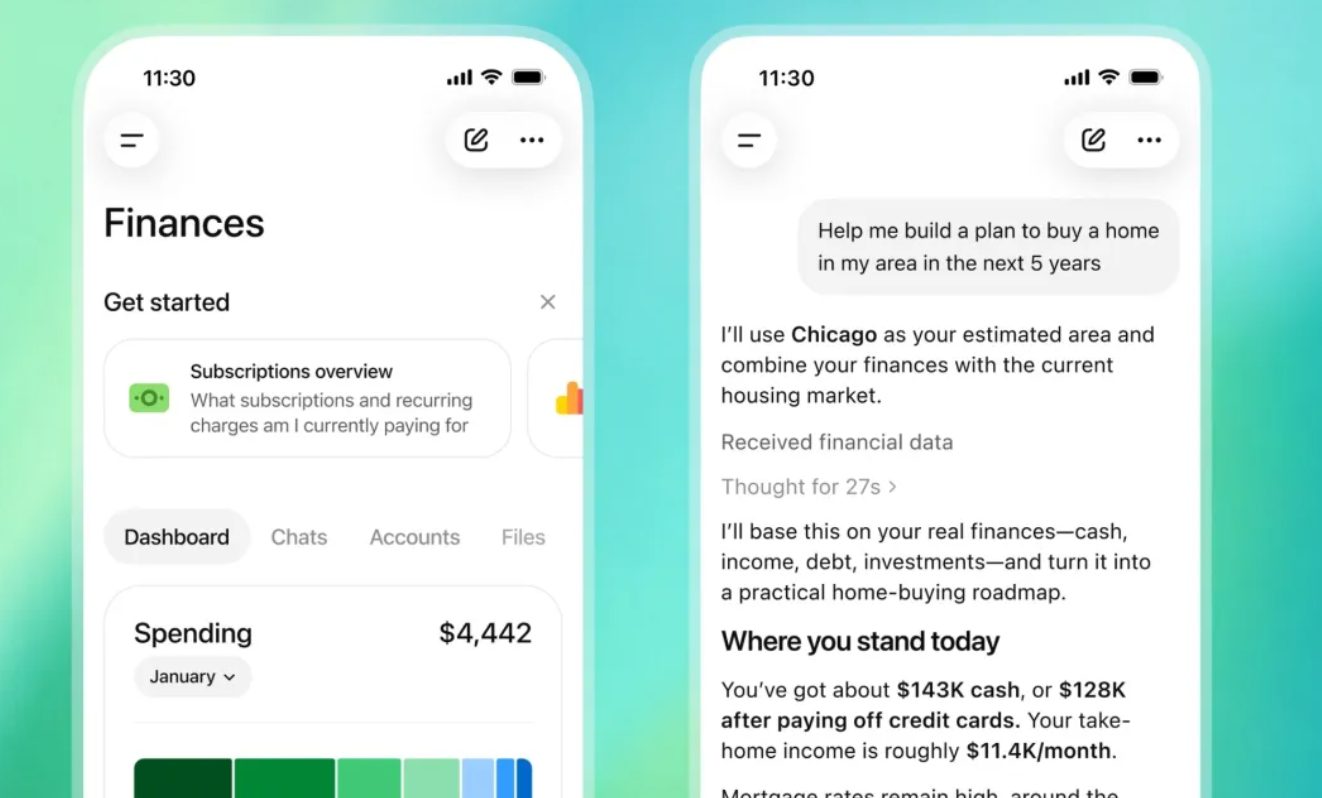

Here’s where it gets interesting: OpenAI already provides age verification for ChatGPT through partners like Persona, plus its own behavioral inference system that predicts user age. The company has implemented teen restrictions and content filtering, essentially building the infrastructure these regulations would mandate. It’s like Netflix lobbying for streaming regulations while owning the best streaming technology. The proposed law doesn’t just protect kids; it potentially locks in OpenAI’s competitive advantage.

Trust Broken, Emails Exposed

Nonprofit leaders felt deceived by misleading outreach that omitted funding sources.

The backlash was swift and brutal. “It’s a very grimy feeling… they’re sending emails that are pretty misleading,” one nonprofit leader told the San Francisco Standard. Coalition members discovered OpenAI’s role only after joining, with the funding relationship conspicuously absent from the coalition’s website. When advocacy groups can’t trust each other about basic funding transparency, the entire child safety conversation gets poisoned.

The Bigger Tech Lobbying Pattern

Meta and Google use similar stealth tactics to shift age verification burdens.

This isn’t OpenAI going rogue—it’s the playbook. Meta and Google have pushed similar legislation that conveniently shifts age verification responsibilities to app stores and operating systems, reducing their own compliance burdens. These companies frame regulatory capture as child protection, turning legitimate safety concerns into competitive moats. You’re watching an industry collectively decide which hoops competitors will have to jump through.

The real tragedy? Genuine child safety advocacy gets harder when parents can’t distinguish between authentic concerns and corporate astroturfing. Next time a coalition emerges pushing tech regulations, check the donor list first.