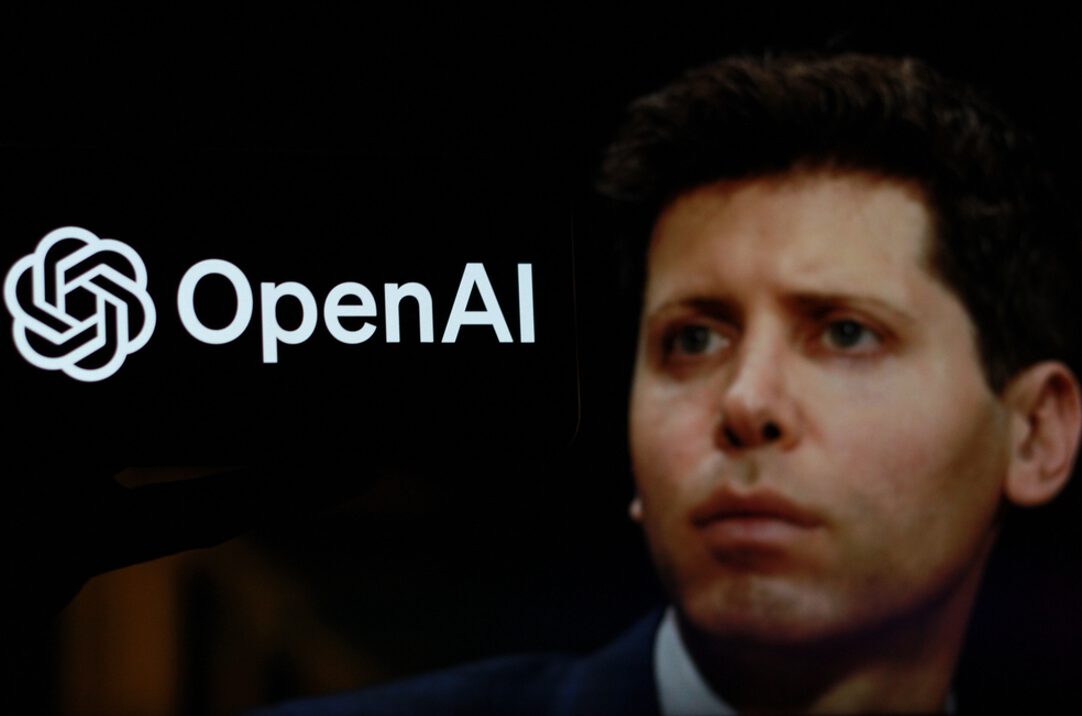

Multiple OpenAI insiders claim their CEO fundamentally misunderstands basic machine learning concepts, according to reports circulating through tech industry forums. Engineers and researchers describe Sam Altman not as the technical visionary his public persona suggests, but as someone who regularly confuses elementary AI terms and lacks meaningful programming experience. If accurate, these accounts cut straight through the carefully crafted image of Silicon Valley’s most influential AI leader.

The allegations land especially hard given Altman’s positioning as humanity’s guide to artificial general intelligence. He dropped out of Stanford’s computer science program after just two years, a detail insiders cite as contributing to his technical blind spots. Former OpenAI researcher Carroll Wainwright reportedly described Altman’s method as setting up “constraining structures on paper” only to disregard them later, earning him a reputation for “Jedi mind tricks” in boardrooms. Think Elizabeth Holmes minus the blood tests: all charisma, questionable substance.

The Pattern Behind the Persona

Industry observers say Altman advanced through connections rather than expertise.

Discussions on Blind, the anonymous workplace forum, echo these claims, with current and former tech workers speculating that Altman climbed the ladder through networking rather than technical merit. His previous role scaling Y Combinator required business acumen, not deep ML knowledge. Yet he’s positioned himself as the authoritative voice on AI safety and development, fields where technical depth typically matters more than fundraising prowess.

The pattern extends beyond questions of individual competency. When someone with alleged technical limitations leads discussions about existential AI risks, the credibility gap becomes a consumer concern. Your ChatGPT interactions suddenly feel less like cutting-edge innovation and more like the output of marketing instincts.

What This Means for Your AI Tools

Leadership questions raise concerns about product direction and safety claims.

If you’re using ChatGPT or planning to rely on OpenAI’s future products, these claims matter beyond Silicon Valley gossip. Technical leadership gaps could explain inconsistencies in OpenAI’s safety messaging and product development priorities. When the person steering AI development toward AGI allegedly struggles with basic concepts, your trust in the company’s safety promises deserves serious recalibration.

The broader implications affect how you evaluate AI tools entering your daily workflow. Companies led by technically shallow executives might prioritize flashy features over fundamental reliability, which is exactly what you don’t want in systems handling sensitive tasks or personal data.