Color accuracy on your phone screen drives you nuts, right? Those sunset photos never match what you actually saw, and your monitor’s “true” colors feel anything but. Scientists at Los Alamos National Laboratory just solved a century-old puzzle that could eventually help fix this digital headache. Lead researcher Roxana Bujack and her team completed Erwin Schrödinger’s unfinished 1920s color perception model (yes, that Schrödinger) by defining the missing mathematical piece that governs how humans actually see gray tones from black to white. “As a scientist, I have always dreamed of proving someone famous wrong,” Bujack said about cracking the Nobel laureate’s incomplete work.

The Math Behind Your Screen’s Color Problems

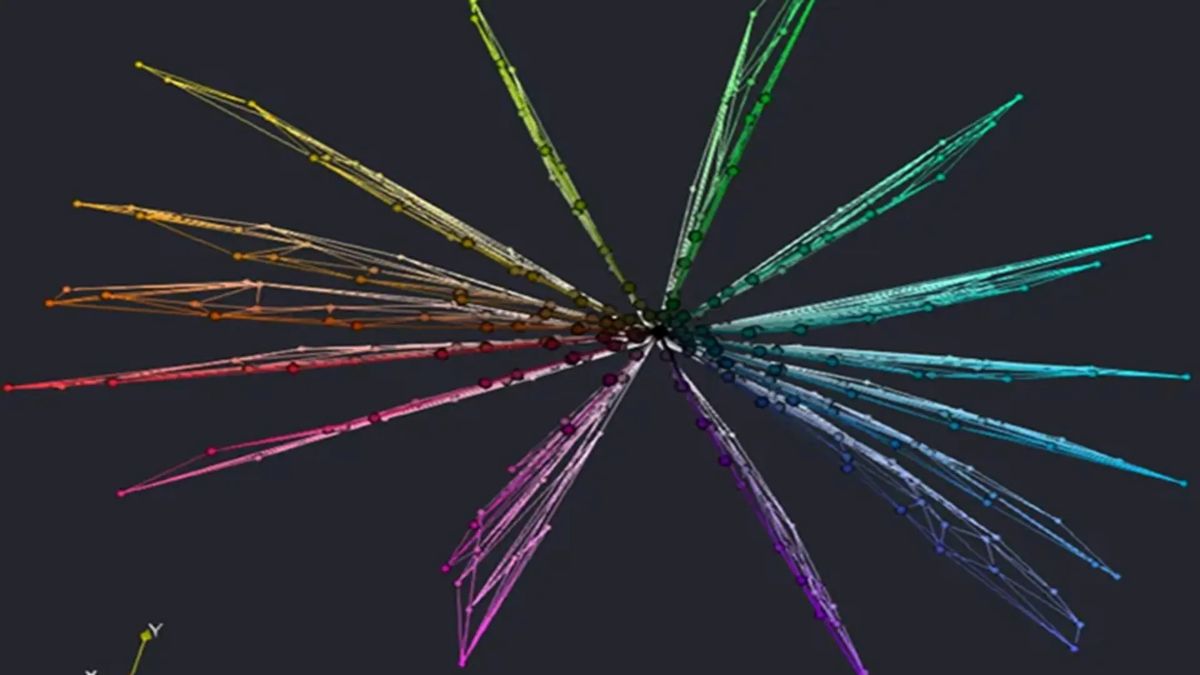

Researchers mapped how your three color-detecting eye cells create a curved 3D space of human vision.

Your eyes contain three types of cone cells, most sensitive to long, medium, and short wavelengths of light (roughly red, green, and blue). But here’s where it gets weird: the way your brain processes these signals creates a curved, three-dimensional perceptual space that doesn’t follow normal geometry. Previous color models relied on straight-line calculations, which cannot account for the Bezold-Brücke effect: those annoying hue shifts that appear as brightness changes and make editing photos feel like chasing ghosts.

Bujack’s team figured out that shortest paths through this curved space (geodesics, in math terms), not straight lines, govern how we perceive color differences. This breakthrough helps explain why your carefully calibrated monitor still can’t nail that perfect Instagram sunset. The missing “neutral axis” they defined using advanced non-Riemannian geometry finally completes the mathematical framework Schrödinger started a century ago.
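To get a feel for why a shortest path can differ from a straight line, here is a minimal toy sketch in Python. It is not the team’s color metric: the `weight` function is a made-up “stretch” of a flat 2D space, and `path_length` is a hypothetical helper that adds up the stretched length of a path. The only point is that once distances cost more in some regions, a bent route can beat the straight one.

```python
import math

def weight(x, y):
    """Hypothetical local stretch: distances cost more near the point (0.5, 0)."""
    return 1.0 + 4.0 * math.exp(-((x - 0.5) ** 2 + y ** 2) / 0.02)

def path_length(points, steps=2000):
    """Approximate the weighted length of a piecewise-linear path (midpoint rule)."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        segment = math.hypot(x1 - x0, y1 - y0)
        for i in range(steps):
            t = (i + 0.5) / steps
            x = x0 + t * (x1 - x0)
            y = y0 + t * (y1 - y0)
            total += weight(x, y) * segment / steps
    return total

# Straight line from (0, 0) to (1, 0) runs right through the stretched region.
straight = path_length([(0.0, 0.0), (1.0, 0.0)])
# A detour via (0.5, 0.3) is longer on paper but avoids most of the stretch.
detour = path_length([(0.0, 0.0), (0.5, 0.3), (1.0, 0.0)])

print(f"straight line: {straight:.3f}")
print(f"detour:        {detour:.3f}")
print("detour is shorter:", detour < straight)
```

In this toy space the detour comes out shorter than the straight line, which is the same basic idea behind measuring color differences along shortest paths instead of with a ruler.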

From Lab Discovery to Your Next iPhone Update

The findings could eventually shape Photoshop algorithms and smartphone camera processing.

This isn’t just academic theory gathering dust. The corrected color model could inform visualization algorithms in tools like:

- Photoshop

- Smartphone HDR processing

- Gaming graphics engines

“These color qualities don’t emerge from additional external constructs such as cultural or learned experiences but reflect the intrinsic properties of the color metric itself,” Bujack explained.

Translation: color perception follows universal mathematical rules that tech companies can finally code properly. Your future displays might actually show colors the way your eyes naturally want to see them. The research also addresses diminishing returns in perceived color differences, where a big jump between two colors looks smaller than the sum of the small steps between them. Accounting for that could improve how devices handle subtle gradients on OLED displays.
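The diminishing-returns idea is easy to see with a few lines of Python. This is only an illustrative sketch: the logarithmic curve in `perceived_difference` is an assumption picked because it flattens out, not the function used in the research, and the step sizes are arbitrary.

```python
import math

def perceived_difference(path_length, scale=1.0):
    """Hypothetical concave response: grows more slowly as colors get farther apart."""
    return scale * math.log1p(path_length / scale)

# Ten small steps, each one unit apart, judged one at a time.
small_step = perceived_difference(1.0)
sum_of_steps = 10 * small_step

# One big jump covering the same ten units, judged in a single comparison.
big_jump = perceived_difference(10.0)

print(f"sum of ten small steps: {sum_of_steps:.3f}")
print(f"one big jump:           {big_jump:.3f}")
print("big jump looks smaller:", big_jump < sum_of_steps)
```

With any curve that flattens like this, the single big jump is rated smaller than the ten small steps added together, which is exactly the behavior a simple add-up-the-distances model cannot reproduce.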

Timeline Reality Check

Consumer applications remain years away, but the foundation is now solid.

Building on their 2022 breakthrough published in PNAS, Bujack’s team presented these latest results at the Eurographics Conference, with findings published in Computer Graphics Forum. However, implementing these corrections into consumer tech requires years of development. Think of it like finally having the GPS coordinates for perfect color—now engineers need to build the roads to get there.

The achievement marks a major step forward in color science. Your perfectly color-matched devices might still be a few product cycles away, but the mathematical foundation that could revolutionize display technology is finally complete.