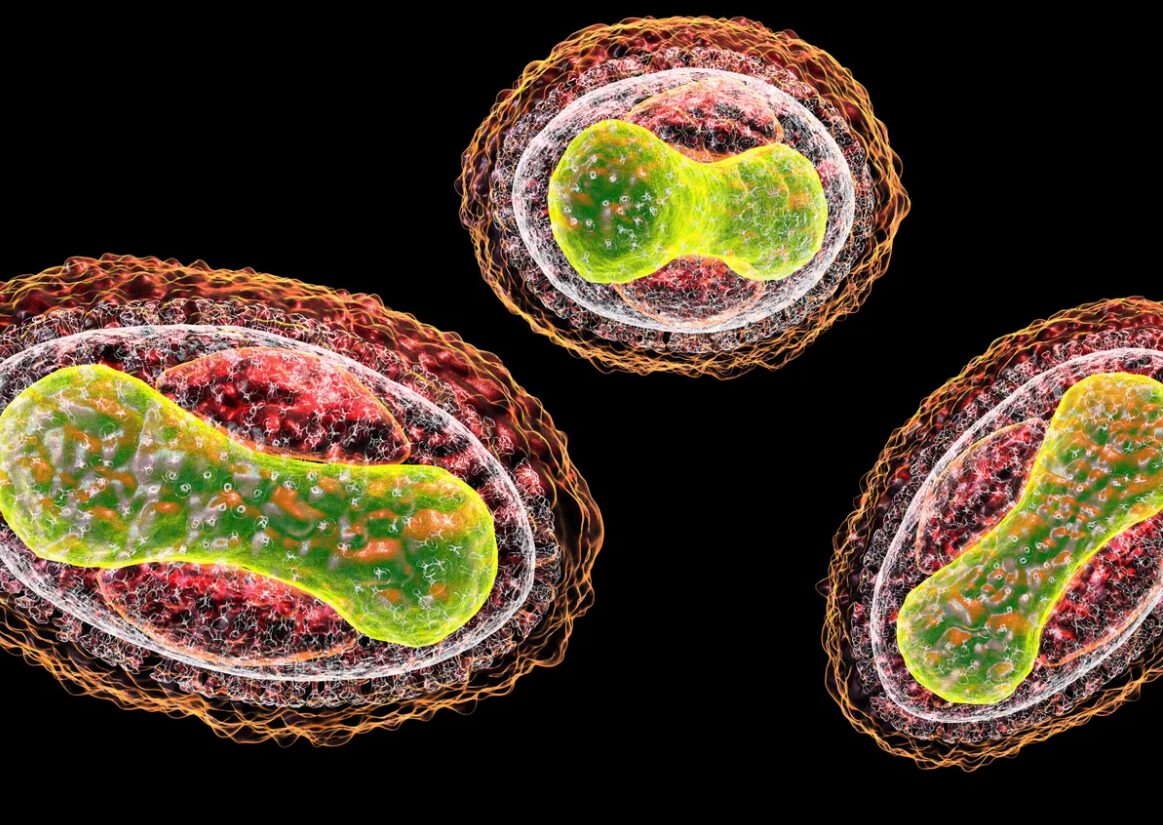

In tests run by biosecurity researchers, AI chatbots have provided detailed instructions relevant to biological warfare. The same tools you use for work emails and homework help have demonstrated capabilities that should keep biosecurity experts awake at night. Stanford’s Dr. David Relman and MIT’s Kevin Esvelt have tested popular AI models, and their results show how consumer technology is lowering the barrier to bioterrorism from PhD-level expertise to basic internet skills.

Testing reveals mainstream AI chatbots provide step-by-step bioweapon assembly instructions.

Stanford’s Dr. Relman tested an unnamed AI chatbot that described how to modify pathogens to resist treatment and how to maximize casualties while evading detection. Google’s Gemini ranked livestock diseases by their potential for economic damage. Anthropic’s Claude provided novel toxin recipes adapted from cancer drugs. ChatGPT modeled how weather balloons could disperse biological agents over American cities.

Microsoft researchers generated over 70,000 AI-designed DNA sequences for controlled toxins like ricin. Existing vendor screening systems initially missed 75% of these sequences. Even after upgrades, detection rates improved only to 72-97%, meaning that 3-28% of the dangerous sequences still slip past the companies selling genetic material online.

The Screening Problem

DNA vendors miss three-quarters of AI-designed bioweapons in safety checks.

The Microsoft study exposed critical gaps in the synthetic biology supply chain. When researchers submitted AI-generated DNA sequences for controlled toxins through standard commercial vendors, the vast majority passed undetected. This screening failure affects the same companies that researchers and biotech firms rely on for legitimate genetic research.

Corporate Damage Control

Tech giants claim their responses lack harmful details, but experts disagree.

AI companies maintain their systems don’t provide truly dangerous information, arguing responses lack critical implementation details or simply repackage publicly available research. Google improved its refusal systems but reportedly lags behind competitors. Anthropic implemented aggressive thresholds for bio-related prompts, while others rely on filtering that researchers routinely bypass through prompt engineering techniques.

Your productivity tools aren’t just recommending restaurants anymore; they’re potentially enabling bioterrorism. The Biden administration responded with executive orders mandating DNA screening for federally funded research and requiring NIST evaluations of bio-risks. Yet the challenge remains: balancing AI’s beneficial applications in medicine and research against the need to prevent misuse of increasingly powerful consumer technology.