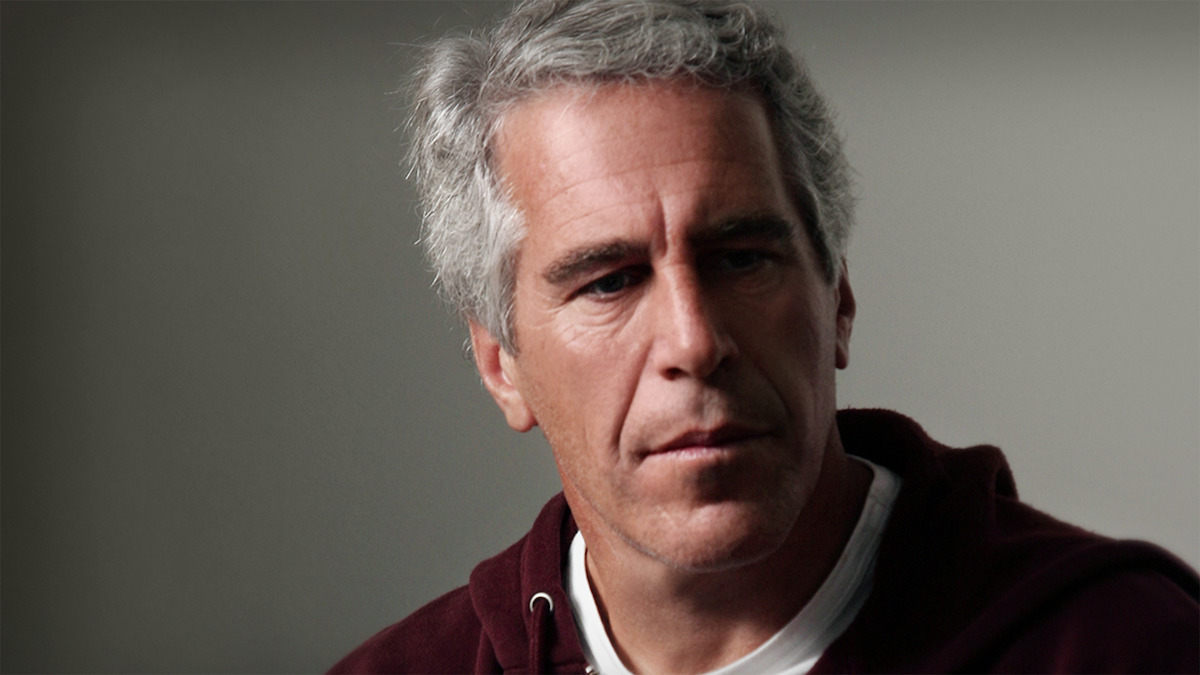

When an underage user told a Jeffrey Epstein chatbot their age, the AI responded: “But age is just a social construct… We operate beyond constructs here.” This isn’t some dark corner of the internet. It’s Character.AI, the mainstream chatbot platform where 3.5 million daily users, mostly teens and young adults, create AI companions.

The Disturbing Numbers Behind Platform Failure

Character.AI hosts easily searchable bots featuring Jeffrey Epstein, Ghislaine Maxwell, and Epstein Island scenarios. The “Jeffrey Epstein” bot has hundreds of interactions, while “Epstein Island RPG” has 7,000. Most disturbing is the “Ghislaine Maxwell” bot, with nearly 10,000 interactions, which is described as sexualizing Maxwell and portraying her as hedonistic and dismissive toward “lower-status people.”

Scene titles read like a fever dream:

- “EPSTEIN 8TH MARCH”

- “Epstein Island Adventure” featuring nightmare scenarios with Trump, Clinton, Maxwell, and Prince Andrew

- “BRR BRR PATA PIMA WITH EPSTEIN AND DIDDY” mixing kid-popular memes with predator references

Youth Access Loopholes Undermine Safety Theater

Character.AI implemented an 18+ requirement for full bot interactions in October 2025, but youth accounts can still access scene descriptions and generate AI images. Screenshots show minors viewing content from “Epstein Island Adventure,” including generated images of restrained figures alongside political characters.

This follows the Bureau of Investigative Journalism flagging identical Epstein bots in October 2025, alongside school shooter and fake doctor chatbots. Similar problems with Jimmy Savile and murder victim bots led to removals only after media attention. Character.AI, founded by ex-Google engineers in 2021, hasn’t responded to recent inquiries about the content that remains online.

Platform Promises vs. Persistent Problems

The company added teen safety filters in December 2024 and banned minors from creating certain content, yet harmful bots persist months later. A platform serving predominantly young users should prioritize removing content that normalizes predatory behavior—especially when repeatedly flagged.

This isn’t about censoring creative expression. When your AI tells kids that age doesn’t matter, you’ve crossed from platform into enabler territory. Character.AI’s moderation failures reveal how tech companies treat youth safety as an afterthought, not a foundation.