Your ChatGPT conversations aren’t as private as you think. A Seoul courtroom is currently examining forensically extracted chat logs that prosecutors claim show a woman researching lethal drug combinations before killing two men and attempting to poison a third.

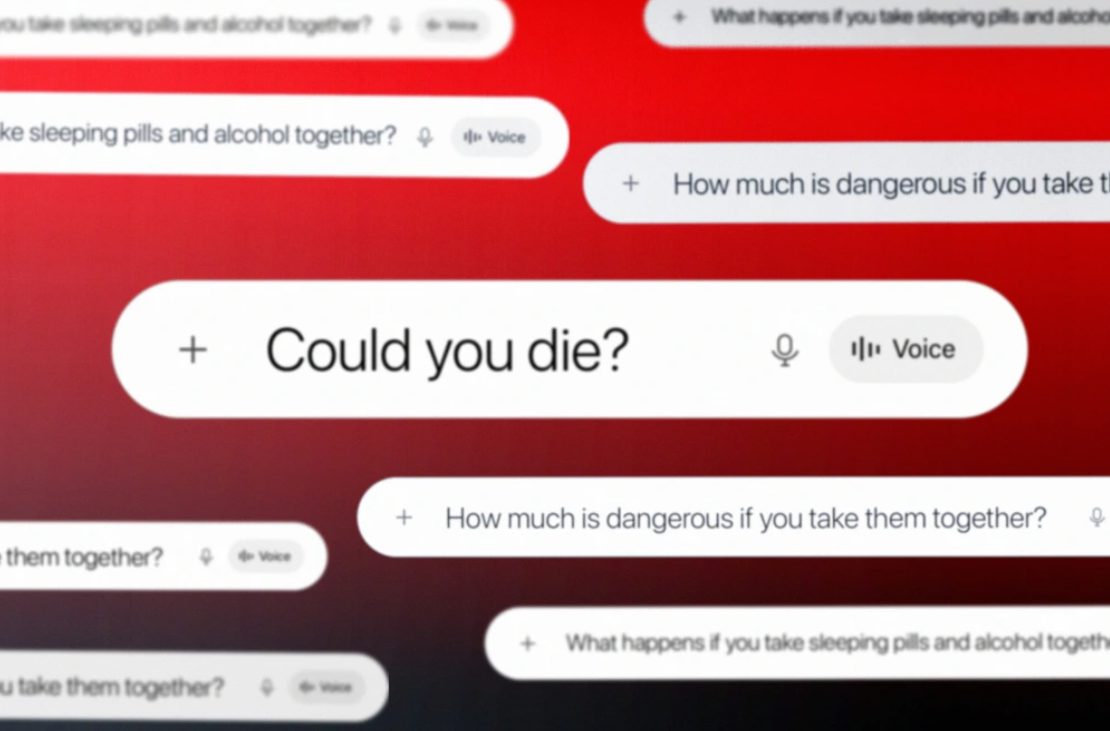

Kim So-young allegedly queried ChatGPT with chilling specificity: “What happens if you take sleeping pills and alcohol together?” followed by “How much is dangerous if you take them together?” and finally, “Could you die?” She reportedly adjusted dosages based on the AI’s responses before targeting her second and third victims with benzodiazepine-laced drinks disguised as hangover cures.

This marks South Korea’s first case using AI chat logs as murder evidence. The legal precedent could reshape how prosecutors approach digital evidence—and how tech companies handle liability for their tools’ misuse.

The Non-Judgmental Problem

Unlike web searches that feel suspicious, AI conversations appear harmlessly conversational.

Googling “how to poison someone” feels obviously suspicious; putting the same question to a chatbot feels like ordinary conversation. A person asked these questions would likely react with concern, but ChatGPT responds with clinical detachment.

“This makes the case distinctive in that ChatGPT searches were directly utilized as a tool in the commission of the offense,” victim’s lawyer Nam Eonho told reporters. The AI’s matter-of-fact responses apparently allowed the planning to proceed without the scrutiny or pushback that asking another person would have invited.

Kim’s case isn’t isolated. Similar AI queries reportedly preceded other recent violent crimes in North America, including a shooting at Florida State University, a Tesla explosion, and a poisoning plot in North Carolina. In each case, the perpetrator treated ChatGPT like a research assistant for violence.

Safety Standards for Sandwiches, Not AI

Current AI oversight pales compared to basic consumer product regulations.

MIT’s Max Tegmark delivered the most damning assessment: “There are fewer safety standards for AI than for sandwiches.” While your lunch requires ingredient labels and allergy warnings, ChatGPT can serve up potentially lethal information with no comparable mandated safeguards.

OpenAI maintains that ChatGPT only provides publicly available information, not encouragement. But critics argue the conversational format makes dangerous knowledge feel normalized—like asking Alexa for the weather instead of researching murder methods.

The Seoul trial continues with a June hearing scheduled. If Kim’s ChatGPT logs prove admissible as evidence of premeditation, expect prosecutors worldwide to start subpoenaing your AI conversations. Your digital assistant just became a potential witness.