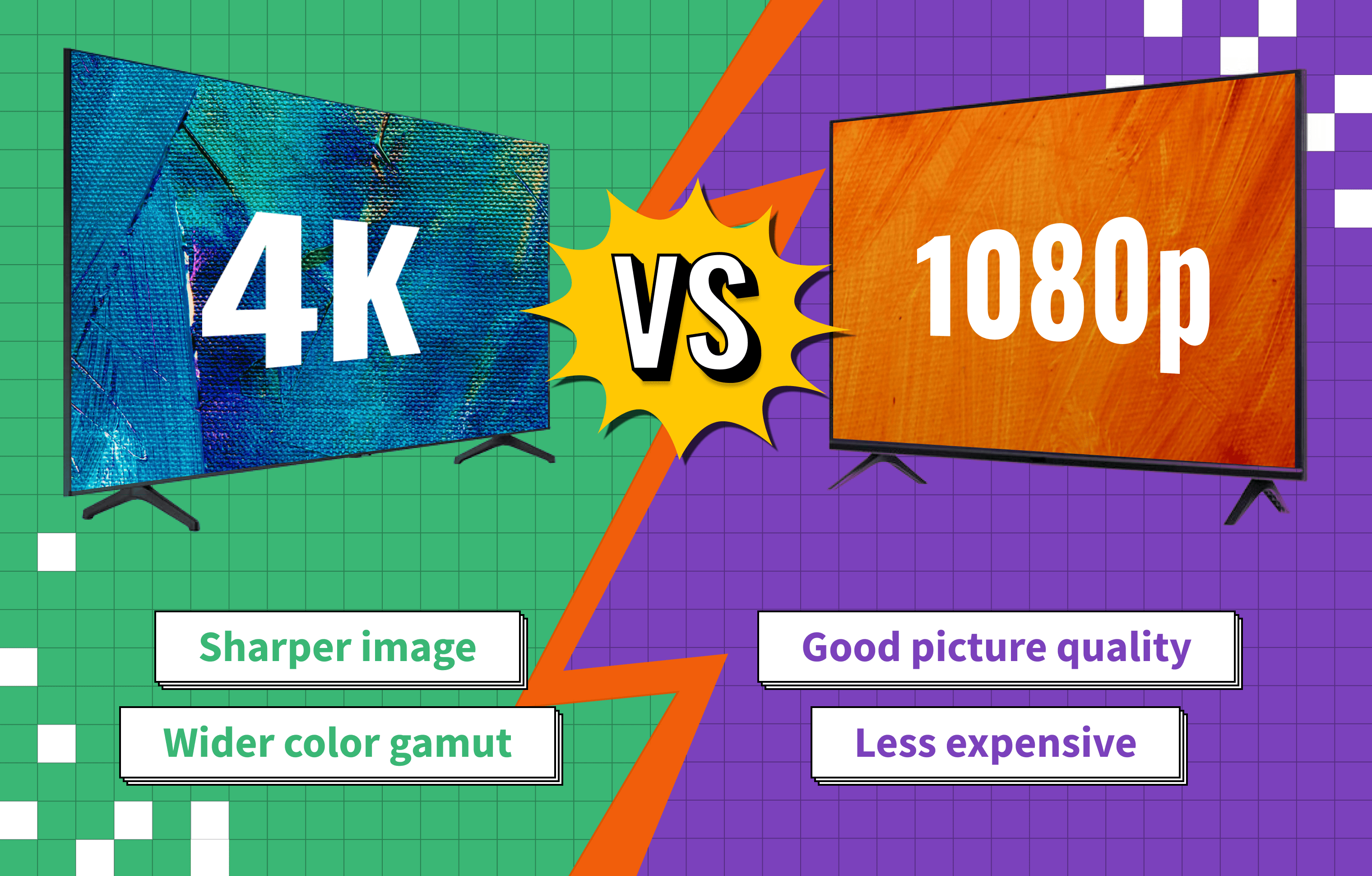

When shopping for the top TVs on the market, you have no doubt come across many 4K models. You may have also come across some models that say 1080p. 4K and 1080p are both terms for TV resolutions. Understanding the differences between these two resolutions is crucial when looking for a new TV, as the resolution you need depends on a few factors, like screen size, viewing distance, content you watch, and budget.

Now that the best 4K TVs are more affordable than ever, it’s no wonder 4K is the most popular TV resolution today. Televisions aren’t the only tech relevant to the 4K discussion, though, as cameras (including camera drones), tablets, and computer monitors have also entered the 4K market. If you are comparing 4K and 1080p for cameras, keep in mind that 4K videos and images have considerably larger file sizes because they contain many more pixels.

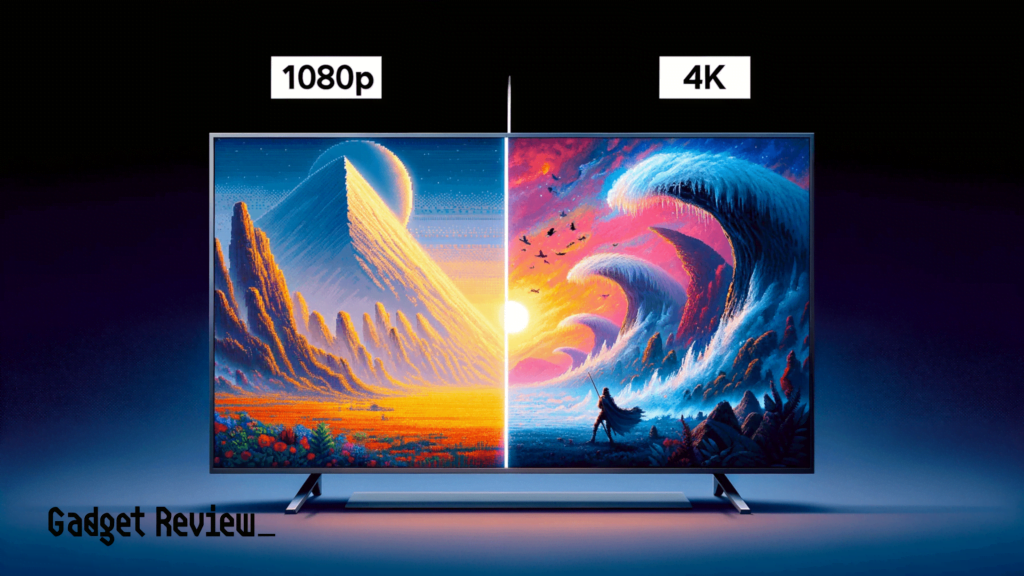

So, what is the difference between a 1080p and a 4K picture?

| Resolution | Pixels | Pros | Cons |
|---|---|---|---|
| 4K | 3840 x 2160 | Sharper and more detailed images, a better viewing experience (especially at larger screen sizes) | More expensive, needs faster internet connection for streaming |
| 1080p | 1920 x 1080 | Good image quality, less expensive | Softer image (at larger screen sizes), less detail |

What is 4K?

4K, otherwise known as Ultra High Definition (UHD), is a measurement of how many pixels are on the screen. While Ultra HD is used interchangeably with 4K, they’re not exactly the same thing (although the TV marketing would have you believe otherwise).

Strictly speaking, 4K is a professional cinema standard with 4,096 horizontal pixels. Most UHD TVs are marketed as 4K but actually have 3,840 horizontal pixels. This isn’t a big difference in the grand scheme of things, but it is a difference. Other terms have also been used for UHD TVs, like Samsung’s SUHD (Super Ultra High Definition).

For the purposes of this article, we will use the TV marketing definition of 4K, as this is the most common usage. 4K has a resolution of 3,840 x 2,160 pixels, which is four times as many pixels as the 1080p format. On a screen of the same size, this results in a higher pixel density, which provides a sharper, more detailed image. The more pixels on the screen, the more realistic the images will look.
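To put a rough number on pixel density, here is a minimal Python sketch that computes pixels per inch (PPI) from a resolution and a diagonal screen size. The 55-inch screen size and the helper name `pixels_per_inch` are assumptions chosen for illustration, not figures from any specific TV.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: pixels along the diagonal divided by the diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in

# Hypothetical example: the same 55-inch screen size at both resolutions.
SCREEN_DIAGONAL_IN = 55

for name, (width, height) in {"1080p": (1920, 1080), "4K": (3840, 2160)}.items():
    ppi = pixels_per_inch(width, height, SCREEN_DIAGONAL_IN)
    print(f"{name}: {ppi:.1f} PPI on a {SCREEN_DIAGONAL_IN}-inch screen")
```

At any given screen size, the 4K panel works out to twice the linear pixel density of the 1080p panel, because it doubles the pixel count in each direction.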

If you compare a zoomed-in 4K image with a zoomed-in 1080p image, you can see that the 1080p image is blockier and less lifelike. Many consumers believe that the jump from 1080p to 4K isn’t noticeable to the naked eye, but in terms of raw numbers, there’s a huge difference between a 4K image and a 1080p image (6,220,800 pixels, to be exact).
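The pixel arithmetic is easy to check yourself. The short Python sketch below totals the pixels for 1080p, for the 3,840 x 2,160 TV definition of 4K, and for the cinema standard. The 2,160-pixel height for the cinema standard and the names `RESOLUTIONS` and `pixel_count` are assumptions added for illustration, since only the horizontal figure is given above.

```python
# Width and height in pixels for each format.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),     # TV marketing definition of 4K
    "Cinema 4K": (4096, 2160),  # assumed height for the cinema standard
}

def pixel_count(name):
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height

base = pixel_count("1080p")
for name in ("4K UHD", "Cinema 4K"):
    total = pixel_count(name)
    print(f"{name}: {total:,} pixels, {total - base:,} more than 1080p "
          f"({total / base:.2f}x)")
```

With these inputs, 4K UHD has 8,294,400 pixels, which is 6,220,800 more than the 2,073,600 pixels of 1080p and exactly four times as many. The cinema standard comes out 6,773,760 pixels ahead of 1080p.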

It’s also important to note that 4K has nothing to do with 3D technology. Many consumers assume that because 4K is considered the best type of television available, they automatically get 3D along with it. Unfortunately, that’s not the case, although the best 4K TV may be 3D capable.

What Is 1080p?

1080p is a high-definition (HDTV) format, and it refers to any TV with a resolution of 1,920 x 1,080 pixels. The visual upgrade from 720p to 1080p is much more noticeable to the naked eye than the jump from 1080p to 4K. The same can be said when comparing WXGA vs full HD TV.

Oddly enough, although 1080p doesn’t have nearly as many pixels as 4K, the majority of companies who manufacture or sell televisions refer to 1080p as “Full HD.”

There is still plenty of content available in 1080p, even as 4K has overtaken it as the main resolution of TVs available today. And if you rely on over-the-air (OTA) broadcasts, you won’t find any of them in 4K, so all of you cord-cutters need not worry just yet.

4K vs 1080p Video Comparison

The short video below is a quick blind test you can do to see if you notice a difference between 1080p and 4K. Seeing if you can tell the difference between the two can go a long way in knowing if you feel the extra money for a 4K TV is worth it over a 1080p model. However, remember that screen size, viewing distance, and the content you watch should be considered before making a purchase.

When 4K Becomes More Beneficial

As stated above, and as we mention in our numerous HD 4K TV reviews, the difference between 4K and 1080p may be minimal to the naked eye, and some people may not notice a difference at all. So when you’re comparing a 4K and a 1080p monitor, for example, the differences can be negligible, and unless you’re buying an extra-large monitor or doing photo/video editing, the upgrade is probably not worth the extra cost.

However, the visual differences become much more noticeable when the TV screen is larger (a 75-inch TV or larger) or when the viewer sits closer to the television. Additionally, if you are an avid gamer and have a gaming TV, the differences are also more noticeable, as the gaming community has a trained eye for visual fidelity. Alternatively, if you don’t care about having the highest resolution, you can check out this guide to the top 32″ LED TVs.